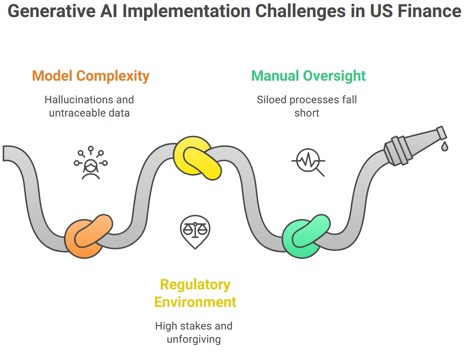

The promise of generative AI is everywhere, but for leaders in US financial services, the reality is far more complicated. While the C-suite is setting bold policies for responsible AI use, the technology teams are struggling to translate those abstract principles into a tangible reality. The gap between a well-intentioned governance document and a scalable, auditable system is where risk lives.

This isn’t a theoretical problem; it’s a high-stakes challenge amplified by an unforgiving US regulatory environment. AI models are complex, they can “hallucinate” or produce fabricated output, and their reliance on vast, often untraceable data sets creates immense legal and reputational risk. The old ways of managing this risk, which rely solely on manual oversight and complex, siloed processes, simply fall short.

This is the very problem our platform, iTuring.ai, was built to solve. We believe that robust governance isn’t a checklist; it’s a built-in feature of your AI infrastructure.

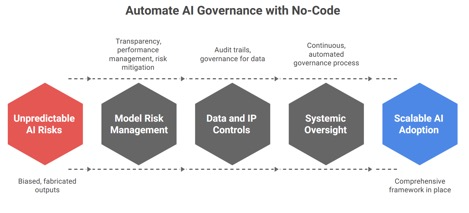

From Manual Oversight to Automated Management

The traditional approach to AI governance often involves a top-down oversight committee and a series of manual checks. This may have worked for a handful of simple AI models, but it does not scale when you have many AI models across the enterprise, many of them with multi-layered complexity.

Our no-code platform provides the critical bridge between governance policies and their practical application. It shifts the burden from a single committee to an automated system that can monitor, audit, and manage your entire AI portfolio in real time. This dynamic oversight ensures that as your AI ecosystem grows, your governance capabilities keep pace.

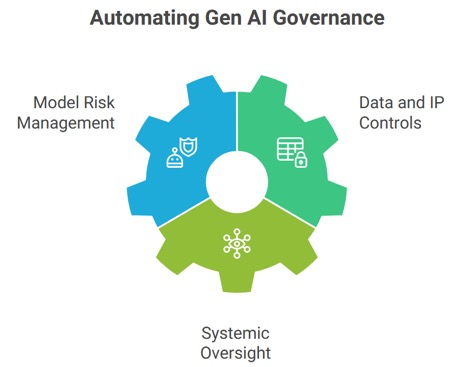

Automating the Core Pillars of Gen AI Governance

A bank’s AI governance framework addresses areas related to risk, data, and compliance. Here’s how a no-code platform can automate these functions, making your policies actionable:

Model Risk Management (MRM)

The most significant risk with generative AI is its unpredictability. Models can produce biased or fabricated outputs, creating legal and ethical landmines. Our no-code platform provides built-in Model Risk Management and Explainable AI capabilities that address these challenges head-on. It gives you transparency into how a model arrived at a decision, along with the tools to manage its performance and mitigate risks.

Data and IP Controls

Generative AI models are trained on massive datasets, creating complex legal liabilities around data privacy and intellectual property. Manually tracking the origin and use of every data point is impossible. Our platform automates these controls by establishing clear audit trails and governance for every piece of data used in model development. It gives you the ability to ensure data is used correctly, reducing the risk of a lawsuit or a regulatory penalty.
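To make the idea of an audit trail for data concrete, here is a minimal sketch of a tamper-evident usage log, in which each entry is hashed together with the hash of the previous entry. This is an illustration only and not the iTuring.ai implementation; the `AuditTrail` class, its field names, and the sample dataset identifiers are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log; each entry commits to the one before it."""
    entries: list = field(default_factory=list)

    def record(self, dataset_id: str, source: str, license_name: str, used_by: str) -> dict:
        # The first entry chains to a fixed all-zero placeholder hash.
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "dataset_id": dataset_id,
            "source": source,
            "license": license_name,
            "used_by": used_by,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative usage with made-up dataset and model names.
trail = AuditTrail()
trail.record("ds-001", "internal-crm", "internal-use-only", "credit-model-v2")
trail.record("ds-002", "vendor-feed", "commercial-license", "credit-model-v2")
print(trail.verify())
```

Chaining the hashes means a reviewer can detect after-the-fact edits to the log without trusting whoever stores it, which is the property an auditor typically cares about.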

Systemic Oversight

A single committee cannot oversee every AI initiative across a large organization. By embedding governance directly into the platform, the bank can create a system where oversight isn’t an occasional event but a continuous, automated process. This lets the enterprise adopt AI with confidence, knowing that a comprehensive framework is in place to manage everything from a simple predictive model to a high-stakes generative AI application.
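One way to picture continuous, automated oversight is as policy expressed in code and run against the full model inventory on a schedule. The sketch below is a simplified illustration under assumed rules, not the iTuring.ai API; `ModelRecord`, the risk tiers, and the validation-age thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical inventory entry for one deployed model."""
    name: str
    risk_tier: str                   # "low" or "high"
    has_explainability_report: bool
    days_since_validation: int


# Illustrative thresholds only; a real policy would set these per institution.
MAX_VALIDATION_AGE_DAYS = {"low": 365, "high": 90}


def policy_violations(model: ModelRecord) -> List[str]:
    """Return the list of policy rules this model currently breaks."""
    violations = []
    if model.days_since_validation > MAX_VALIDATION_AGE_DAYS[model.risk_tier]:
        violations.append("validation is overdue")
    if model.risk_tier == "high" and not model.has_explainability_report:
        violations.append("explainability report is missing")
    return violations


def scan_inventory(models: Iterable[ModelRecord]) -> Dict[str, List[str]]:
    """Check every model and keep only those with at least one violation."""
    report = {}
    for model in models:
        found = policy_violations(model)
        if found:
            report[model.name] = found
    return report


# Illustrative usage with made-up models.
inventory = [
    ModelRecord("churn-predictor", "low", False, 200),
    ModelRecord("genai-advisor", "high", False, 120),
]
for name, issues in scan_inventory(inventory).items():
    print(name, issues)
```

Because the rules live in one function rather than in a committee checklist, the same check applies uniformly to a simple predictive model and a generative AI application, and it can run as often as the inventory changes.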

The Final Piece of the Puzzle

For too long, the conversation around automating AI governance has been stuck in the boardroom. It’s time to bring that vision to life. The iTuring.ai no-code platform not only makes model development faster and easier but also embeds high-level governance policies into the model development process, so that processes and practices are auditable, scalable, and effective.

It’s the final piece of the puzzle that allows a bank to unlock the power of ML models and generative AI, confident that its AI governance policies are being executed diligently across the enterprise.