Artificial intelligence is no longer a futuristic concept; it is an integral part of the financial services industry, powering everything from fraud detection to loan approvals. While the efficiency and speed of AI are undeniable, the complex, opaque nature of many AI models, often referred to as the “black box”, has created significant challenges. For financial institutions, this lack of transparency is not just a technical issue; it’s a critical business risk that can lead to regulatory penalties, financial losses, and a loss of stakeholder trust. This is why Explainable AI is no longer a luxury but a fundamental requirement.

The urgency for Explainable AI in financial services is driven by several key factors:

● Regulatory Scrutiny: Regulatory bodies worldwide, including the OCC in the US and the Reserve Bank of India, are increasingly scrutinizing AI models. They require financial institutions to demonstrate how their models arrive at a decision, especially for high-stakes actions like loan denials. In the US, the Equal Credit Opportunity Act (ECOA) and the Fair Credit Reporting Act (FCRA) require lenders to notify consumers of adverse actions such as credit denials, and ECOA entitles applicants to the specific reasons for the decision. Without explainability, compliance is nearly impossible.

● Mitigating Bias and Ensuring Fairness: The “black box” can inadvertently perpetuate or even amplify existing biases in data, leading to discriminatory outcomes based on protected characteristics like race or gender. Explainable AI provides the tools to detect and mitigate these biases proactively, ensuring fairness and accountability throughout the model’s lifecycle.

● Building Trust with Stakeholders: From customers to auditors, everyone needs to trust the AI models in use. When a loan is denied or a transaction is flagged for fraud, customers expect a clear explanation. Explainable AI empowers financial institutions to provide meaningful, human-understandable reasons, which builds confidence and strengthens customer relationships, even when delivering unfavorable news.
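
As a rough illustration of the bias detection mentioned above, the sketch below compares approval rates across groups and flags any group whose rate falls below 80% of a reference group's rate. The 0.8 threshold is the "four-fifths" rule of thumb borrowed from employment-selection guidance; it is a screening heuristic, not a legal standard for credit decisions. The function names, group labels, and sample decisions are all hypothetical and made up for this example.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def adverse_impact_ratios(decisions, reference_group):
    """Divide each group's approval rate by the reference group's rate."""
    rates = approval_rates(decisions)
    ref = rates[reference_group]
    if ref == 0:
        raise ValueError("reference group has no approvals")
    return {g: rate / ref for g, rate in rates.items()}

# Hypothetical, made-up decisions: (group label, approved?)
sample = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 42 + [("B", False)] * 58
)

ratios = adverse_impact_ratios(sample, reference_group="A")
# Flag groups below the four-fifths screening threshold (assumed here).
flagged = [g for g, r in ratios.items() if r < 0.8]
print(ratios, flagged)
```

A flagged group is a prompt for further investigation, for example by checking which features drive the gap, rather than proof of discrimination on its own.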

Shining a Light on the “Black Box” with iTuring.ai’s Explainable AI Capabilities

At iTuring.ai, we believe that transparency is the foundation of trustworthy AI. Our platform is designed to make every step of the AI lifecycle explainable and auditable. We’ve moved past the traditional “black box” approach by building in transparency and accountability from the ground up, not as an afterthought.

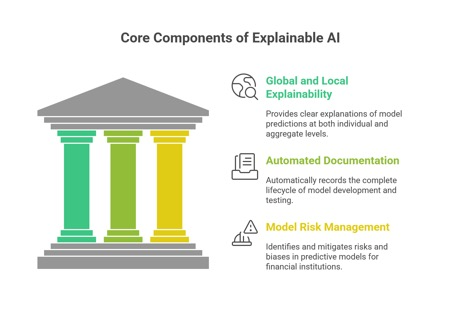

Our Explainable AI capabilities provide:

● Global and Local Explainability: We offer clear explanations of model predictions at both the individual customer level and the aggregate model level. This means a loan officer can understand why a specific applicant was denied, while a compliance officer can get a high-level overview of the model’s overall behavior.

● Automated, Audit-Ready Documentation: Our platform automatically records the complete lifecycle of model development, validation, and testing. This provides a transparent and auditable trail of every step in the process, so you don’t have to scramble to prepare for an audit: the documentation is always ready.

● Model Risk Management: This is a crucial area where our Explainable AI capabilities truly shine. Our Model Risk Management (MRM) framework is designed exclusively for financial institutions to identify, assess, and mitigate the risks and biases inherent in predictive models. It provides early warning indicators for potential model failures or errors, helping to prevent financial losses and regulatory action.
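
To make the difference between local and global explainability concrete, here is a minimal sketch using a simple logistic scoring model. It is an illustration only, not a description of how the iTuring.ai platform works. The weights, baseline values, feature names, and helper functions are all hypothetical. A local explanation is each feature's contribution to one applicant's log-odds relative to a baseline applicant; the global view averages the absolute contributions across a portfolio.

```python
import math

# Hypothetical model: weights, intercept, and baseline (average) feature values.
WEIGHTS = {"debt_to_income": -4.0, "years_employed": 0.3, "late_payments": -0.8}
BASELINE = {"debt_to_income": 0.30, "years_employed": 5.0, "late_payments": 1.0}
INTERCEPT = 1.0

def score(applicant):
    """Approval probability from the logistic model."""
    z = INTERCEPT + sum(WEIGHTS[f] * applicant[f] for f in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))

def local_explanation(applicant):
    """Per-feature contribution to the log-odds, relative to the baseline."""
    return {f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS}

def global_explanation(applicants):
    """Mean absolute contribution of each feature across a portfolio."""
    totals = {f: 0.0 for f in WEIGHTS}
    for applicant in applicants:
        for f, contribution in local_explanation(applicant).items():
            totals[f] += abs(contribution)
    return {f: total / len(applicants) for f, total in totals.items()}

def top_reasons(applicant, n=2):
    """Features that pushed the score down the most, most negative first."""
    negative = [(f, c) for f, c in local_explanation(applicant).items() if c < 0]
    return [f for f, _ in sorted(negative, key=lambda fc: fc[1])[:n]]

# Made-up applicants for illustration.
applicants = [
    {"debt_to_income": 0.55, "years_employed": 1.0, "late_payments": 3.0},
    {"debt_to_income": 0.20, "years_employed": 8.0, "late_payments": 0.0},
]

print(score(applicants[0]), top_reasons(applicants[0]))  # local view
print(global_explanation(applicants))                    # global view
```

In this sketch, `top_reasons` is the kind of output a loan officer could translate into the reasons given to a declined applicant, while `global_explanation` is closer to what a compliance officer would review. Non-linear models need a dedicated attribution method, which this example does not cover.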

The Future is Transparent

The financial services industry is at an inflection point. The move towards AI is unstoppable, but the path forward must be guided by responsibility. Relying on opaque AI models exposes institutions to regulatory fines and lost trust. By embracing Explainable AI, financial institutions can unlock the full potential of their data while confidently navigating the complex regulatory landscape.

iTuring.ai is at the forefront of this movement, providing the tools to build, deploy, and govern AI models with complete transparency. This is not just about meeting a regulatory requirement; it’s about building a future where AI and human intelligence work together, transparently and ethically, to drive business success.