TL;DR

- CFPB has confirmed existing laws apply fully to AI

- AI-generated communications carry identical obligations to human ones

- Model governance is now an active CFPB examination priority

- Institutions must document, explain, and audit every AI decision

- Ungoverned AI in collections creates compounding, quantifiable legal exposure

Think of a factory that installs new automated machinery but keeps filing the same safety inspection reports it wrote for the manual line. The machines are faster, the output is larger, and the floor looks nothing like it did. But the documentation still says “operated by hand.” When the inspector arrives, the gap between what the reports describe and what the floor actually does is the violation.

This is the compliance posture many collections operations are carrying into CFPB examinations in 2026. AI is running the outreach, scoring the accounts, and triggering the escalations, but the governance documentation was written for a different era. Examiners do not ask how fast your models run. They ask whether you can prove, decision by decision, that every automated action was lawful.

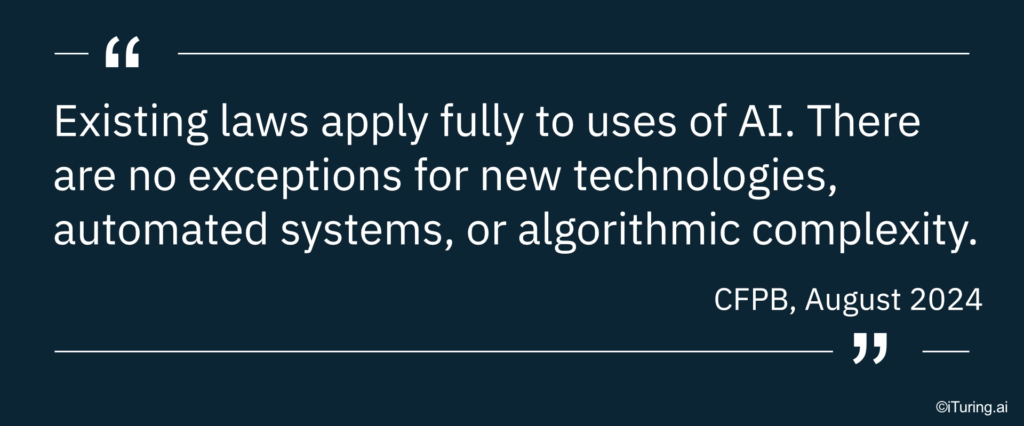

What the CFPB Has Actually Said About AI

The CFPB has not introduced sweeping new AI regulations. What it has done is progressively, and very deliberately, clarified that every existing consumer financial protection law applies to AI without exception.

Here is the documented record in sequence:

- May 2022: In Circular 2022-03, the CFPB confirmed that ECOA adverse action obligations apply fully to algorithmic and AI-based models, not just human decisions

- September 2023: Circular 2023-03 confirmed that creditors cannot satisfy adverse action notice requirements by picking the closest generic reason from a sample-form checklist; the stated reasons must specifically and accurately reflect what the model actually relied on

- July 2024: The CFPB joined other federal agencies in finalizing a rule on automated valuation models used in mortgage lending, extending quality-control and nondiscrimination expectations for algorithmic tools to another product line

- August 2024: In its formal response to the Treasury Department’s RFI on AI, the CFPB stated plainly that “existing laws apply fully to uses of AI” and identified collections, fraud models, and automated customer service as specific compliance risk areas

- March 2026: CFPB debt collection rules update confirmed that AI decisioning, data privacy, and automated communications remain active areas of regulatory focus

The pattern is consistent. No AI carve-outs. No grace period for complexity. The institution is responsible for what the model does.

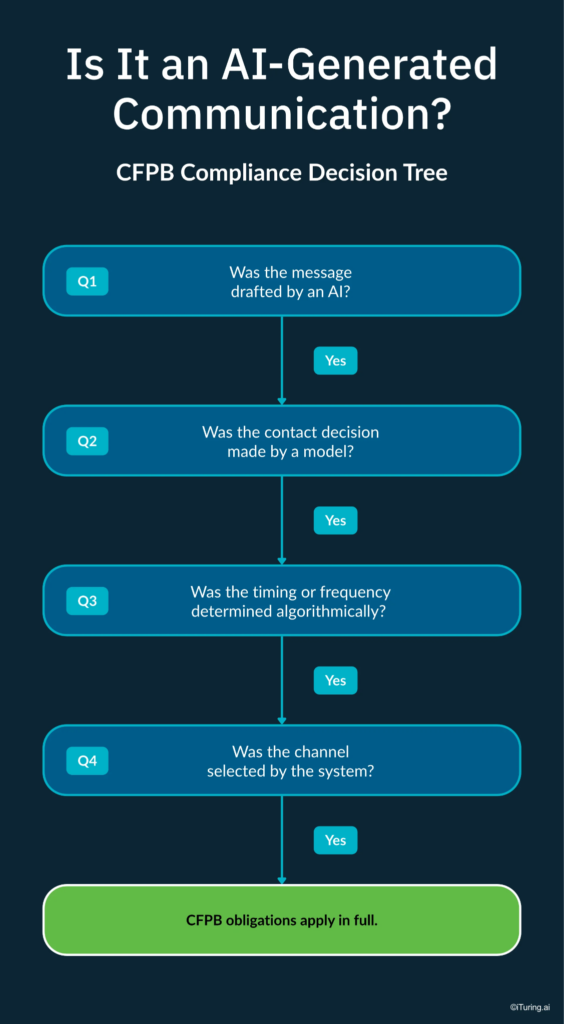

What “AI-Generated Communications” Means Under Enforcement

A common assumption is that CFPB scrutiny of AI in collections focuses on the big decisions: credit denials, account closures, charge-offs. In practice, the enforcement risk is much broader.

Under the CFPB’s framework, an AI-generated communication includes:

- AI-drafted SMS messages and emails sent through automated workflow triggers

- AI voice agents conducting live outreach calls without a human on the line

- Automated scoring decisions that escalate an account to a higher-intensity collections queue

- GenAI-produced letters or notices generated without individual human review per send

- Chatbot interactions where a consumer invokes a statutory right (like disputing a debt) and the system fails to register or honor it

The CFPB does not draw a distinction between a human agent placing a collections call and an AI system doing the same thing. The obligation to disclose who is calling, the obligation not to harass, the obligation to honor opt-outs, and the obligation to respect dispute requests are all identical.
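One way to put that parity into practice is a single pre-contact gate that every channel, human or automated, must pass through. The sketch below is illustrative only: the `ConsumerState` fields, the `Channel` names, and the blocking order are assumptions made for the example, and it simplifies the legal rules rather than restating them.

```python
from dataclasses import dataclass, field
from enum import Enum


class Channel(Enum):
    HUMAN_CALL = "human_call"
    AI_VOICE = "ai_voice"
    SMS = "sms"
    EMAIL = "email"
    CHATBOT = "chatbot"


@dataclass
class ConsumerState:
    """Hypothetical per-consumer flags a collections system might track."""
    opted_out_channels: set = field(default_factory=set)
    dispute_open: bool = False    # consumer has disputed the debt
    cease_contact: bool = False   # consumer asked for contact to stop


def may_contact(state: ConsumerState, channel: Channel) -> tuple:
    """Return (allowed, reason). No channel is exempt from any check."""
    if state.cease_contact:
        return False, "cease-contact request on file"
    if state.dispute_open:
        return False, "dispute pending"
    if channel in state.opted_out_channels:
        return False, f"opted out of {channel.value}"
    return True, "no blocking flag"


state = ConsumerState(dispute_open=True)
# The AI voice agent and the human agent get the same answer.
print(may_contact(state, Channel.AI_VOICE))
print(may_contact(state, Channel.HUMAN_CALL))
```

Because the gate takes the channel as an input but never relaxes a check based on it, an AI voice agent cannot be configured into a weaker rule set than a human caller.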

The Three Enforcement Triggers to Watch in 2026

Based on the CFPB’s published guidance and the trajectory of its supervisory priorities, three specific areas carry the highest enforcement risk for AI-driven collections operations this year.

Explainability gaps

When a model flags an account for collections action and the institution cannot produce a specific, accurate explanation of why, it risks adverse action notice violations under ECOA and Regulation B wherever that action qualifies as an adverse action. The CFPB made the standard explicit in Circular 2023-03: broad category reasons that do not reflect actual model reasoning are not compliant. Per Skadden’s analysis of the CFPB’s 2024 Treasury response, regulators will continue to scrutinize “complex models” with a focus on whether institutions can produce decision-level explanations for individual consumers.
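To make "decision-level explanation" concrete, here is a minimal sketch for the simplest case, a linear scoring model. For a linear score, each feature's contribution to one account's result can be written as weight × (account value − portfolio mean), which matches the SHAP attribution for linear models when features are treated as independent. The feature names, weights, and means are hypothetical; a non-linear production model would need a dedicated explainability library instead.

```python
def reason_codes(weights, means, account, top_n=2):
    """Rank the features that pushed this account's score up the most.

    For a linear score: contribution_i = weight_i * (value_i - mean_i).
    Only positive contributions are returned, since those are the
    factors that moved the account toward escalation.
    """
    contributions = {
        name: weights[name] * (account[name] - means[name])
        for name in weights
    }
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return [(name, round(value, 3)) for name, value in ranked[:top_n] if value > 0]


# Hypothetical model weights and portfolio averages, for illustration only.
WEIGHTS = {"days_past_due": 0.02, "broken_promises": 0.30, "balance_k": 0.05}
MEANS = {"days_past_due": 45.0, "broken_promises": 1.0, "balance_k": 2.0}

account = {"days_past_due": 90.0, "broken_promises": 3.0, "balance_k": 1.5}
print(reason_codes(WEIGHTS, MEANS, account))
# [('days_past_due', 0.9), ('broken_promises', 0.6)]
```

The output is specific to one account, which is the property the circular asks for: two accounts with the same score can come back with different reasons.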

Fair lending exposure

AI models trained on historical collections data learn from past outcomes. If those past outcomes were shaped by discriminatory practices, the model learns and perpetuates that pattern. The CFPB has explicitly stated that fair lending testing protocols must cover post-origination models including collections, not just underwriting. Courts have already held that using an algorithmic tool that produces disparate impact can itself constitute a fair lending violation, even if the intent was neutral.
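A first-pass screen for this kind of pattern compares outcome rates across groups. The sketch below computes an adverse impact ratio on escalation decisions. The 0.8 threshold is an assumption borrowed from the "four-fifths" screening heuristic used in employment contexts, not a CFPB standard, and the groups and counts are fabricated for illustration. A ratio below the threshold is a prompt for deeper statistical review, not a legal conclusion.

```python
from collections import Counter


def escalation_rates(records):
    """records: iterable of (group, escalated). Returns escalation rate per group."""
    totals, escalated = Counter(), Counter()
    for group, was_escalated in records:
        totals[group] += 1
        escalated[group] += int(was_escalated)
    return {g: escalated[g] / totals[g] for g in totals}


def adverse_impact_ratio(rates, reference_group):
    """Each group's favorable (non-escalated) rate divided by the reference group's."""
    ref_favorable = 1 - rates[reference_group]
    return {g: (1 - r) / ref_favorable for g, r in rates.items()}


# Illustrative data: 20 of 100 accounts escalated in group A, 40 of 100 in group B.
records = (
    [("A", True)] * 20 + [("A", False)] * 80
    + [("B", True)] * 40 + [("B", False)] * 60
)
rates = escalation_rates(records)
ratios = adverse_impact_ratio(rates, reference_group="A")

SCREENING_THRESHOLD = 0.8  # assumed heuristic, see note above
flagged = [g for g, ratio in ratios.items() if ratio < SCREENING_THRESHOLD]
print(flagged)
```

Running the same check on a schedule against production decisions, rather than once at build time, is what turns it into monitoring.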

Governance documentation gaps

An institution that cannot produce model lineage, approval records, bias audit evidence, and drift monitoring logs during examination is not just administratively unprepared. The CFPB increasingly treats the absence of governance documentation as evidence of structural non-compliance. Having the model in production is not the standard. Being able to prove, in real time, that the model operated within lawful parameters from day one is.
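A simple way to find these gaps before an examiner does is to check each model in the inventory against a required evidence list. This is a sketch under stated assumptions: the four artifact names mirror the items named above, while the model names and file paths are placeholders.

```python
REQUIRED_EVIDENCE = ("lineage", "approval_record", "bias_audit", "drift_log")


def evidence_gaps(inventory):
    """Map each model name to the required artifacts it is missing."""
    gaps = {}
    for model_name, artifacts in inventory.items():
        missing = [item for item in REQUIRED_EVIDENCE if not artifacts.get(item)]
        if missing:
            gaps[model_name] = missing
    return gaps


# Hypothetical inventory; model names and paths are placeholders.
inventory = {
    "contact_propensity_v3": {
        "lineage": "evidence/cp3/lineage.json",
        "approval_record": "evidence/cp3/approval.pdf",
        "bias_audit": "evidence/cp3/bias_audit.pdf",
        "drift_log": "evidence/cp3/drift.csv",
    },
    "vendor_risk_score": {
        "lineage": "evidence/vrs/lineage.json",
        "approval_record": "evidence/vrs/approval.pdf",
        "bias_audit": None,  # never performed
        # no drift_log entry at all
    },
}
print(evidence_gaps(inventory))
# {'vendor_risk_score': ['bias_audit', 'drift_log']}
```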

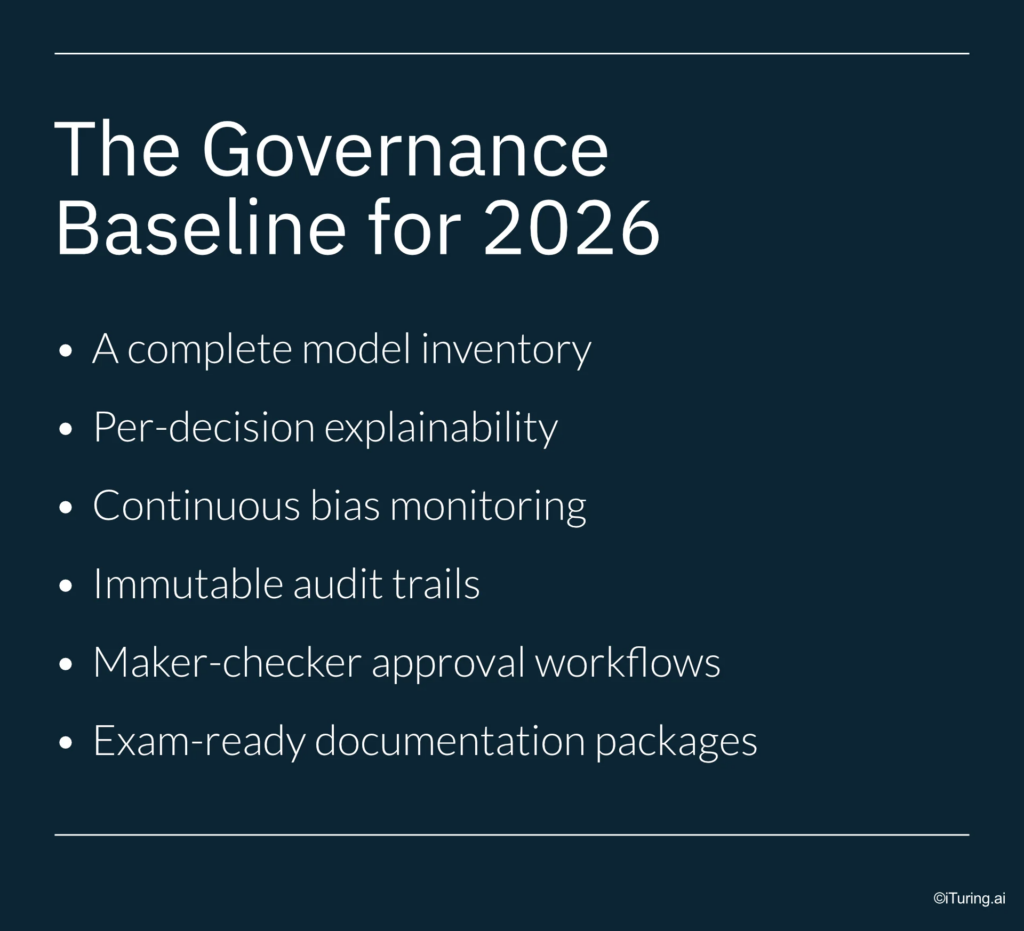

What AI Governance in Collections Actually Requires

Understanding the CFPB’s position is one thing. Translating it into an operational governance structure is another. Here is what a genuinely compliant AI governance framework for collections looks like:

- A complete model inventory that covers every model touching a collections workflow — proprietary models, vendor models, third-party scoring tools, and GenAI components alike

- Per-decision explainability using techniques like SHAP or LIME that generate account-level reasons, not aggregate summaries of model behavior

- Continuous bias monitoring that runs post-deployment, not just at model build time — because data distributions shift and bias can emerge in production even in a model that tested clean

- Immutable audit trails with precise timestamps for every automated outreach attempt, every scoring decision, every escalation trigger, and every opt-out registration

- Maker-checker approval workflows requiring documented human sign-off before any new model, or any change to an existing model, goes into production

- Exam-ready documentation packages that compile full model lineage, approval history, explainability evidence, and bias audit records into a reviewable package, producible within hours, not weeks
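Of the items above, the audit trail is the easiest to illustrate in code. The sketch below uses a hash chain: each entry stores the hash of the previous one, so any later edit to an earlier record breaks verification. This makes the log tamper-evident rather than truly immutable, and it assumes an in-memory list; a production system would also need write-once storage and access controls. The event fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append a timestamped event that commits to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash and confirm each entry links to the one before it."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("timestamp", "event", "prev_hash")}
        if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


log = []
append_event(log, {"type": "score", "account": "ACCT-001", "model": "risk_v3"})
append_event(log, {"type": "opt_out", "account": "ACCT-001", "channel": "sms"})
print(verify_chain(log))  # True

log[0]["event"]["model"] = "risk_v2"  # simulate a retroactive edit
print(verify_chain(log))  # False
```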

The Cost of Getting This Wrong

The numbers clarify what is at stake.

FDCPA statutory damages run up to $1,000 per individual action, plus actual damages and attorney’s fees. TCPA exposure for non-compliant automated calls or messages sits at $500 to $1,500 per violation, with the higher figure reserved for willful or knowing violations. An AI system making the same non-compliant decision across thousands of accounts does not produce one violation. It produces a class-action-ready portfolio of them.
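The compounding effect is simple arithmetic. As a worked example, assume a hypothetical workflow that sent 10,000 non-compliant automated messages; the contact count is invented for illustration, and the per-violation figures are the TCPA range cited above.

```python
TCPA_MIN_PER_VIOLATION = 500     # dollars
TCPA_MAX_PER_VIOLATION = 1_500   # dollars, willful or knowing violations


def tcpa_exposure_range(noncompliant_contacts: int) -> tuple:
    """Return (low, high) statutory exposure in dollars for a contact count."""
    return (
        noncompliant_contacts * TCPA_MIN_PER_VIOLATION,
        noncompliant_contacts * TCPA_MAX_PER_VIOLATION,
    )


low, high = tcpa_exposure_range(10_000)  # hypothetical contact count
print(f"${low:,} to ${high:,}")
# $5,000,000 to $15,000,000
```

The figure scales linearly with volume, which is why a single misconfigured rule in an automated workflow is a different order of risk than a single agent's mistake.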

Beyond statutory penalties, CFPB enforcement actions can result in consent orders that constrain an institution’s operational autonomy for years — requiring pre-approval for new model deployments, mandatory third-party audits, and public disclosure of findings. The reputational cost of that outcome typically exceeds the statutory cost by a significant multiple.

The institutions that will navigate the current supervisory environment without incident are not the ones with the most sophisticated AI. They are the ones with the most governed AI.

How iTuring Approaches This Problem

iTuring was purpose-built for regulated industries, which means the governance infrastructure described above is not a separate compliance project running alongside the AI. It runs inside the platform itself.

Every model deployed through the iTuring platform carries:

- SHAP and LIME explainability at the individual prediction level, not just the aggregate

- Immutable audit trails with timestamps for every model action and outreach trigger

- Maker-checker approval workflows with full documentation before any model goes live

- Continuous monitoring across 60 parameters including drift, bias, and performance thresholds

- One-click exam documentation that compiles complete model lineage, approval history, and explainability records into an examiner-ready package

For collections teams operating in the current regulatory environment, the question is no longer whether to govern AI. It is whether the governance runs inside the model or gets bolted on after the fact.

Book a discovery session with iTuring to see what embedded AI governance looks like in a live collections environment.