TL;DR

- RBI Digital Lending Directions 2025 issued on May 8, 2025

- NBFCs remain fully liable for every LSP-run AI collections action

- Borrower consent must be verified before every AI outreach attempt

- Collection calls are restricted to 8 AM to 7 PM, no exceptions

- Scale-Based Regulation determines governance depth per NBFC tier

Think of a highway where the lanes were repainted overnight. The road is the same. The vehicles are the same. But the rules for how to drive it were redrawn while most drivers were asleep. The NBFCs that notice the new markings and adjust their driving are building an operational advantage. The ones still following the old lane lines are accumulating regulatory risk with every kilometre they cover.

India’s NBFC sector found itself in exactly this position on May 8, 2025. The RBI’s Digital Lending Directions 2025 did not ban AI in collections, restrict NBFC lending activity, or introduce punitive new penalties out of nowhere. What the Directions did was redraw the lane markings across the full loan lifecycle, extending regulatory expectations that previously focused on origination all the way through to recovery. New requirements on consent, disclosure, LSP accountability, data handling, and AI governance now apply to every collections interaction an NBFC touches, whether the outreach is made by a human agent or an automated system.

This blog explains what changed, why it matters specifically for AI-driven collections, and what a compliant NBFC AI collections operation looks like in 2026.

What Changed on May 8, 2025

The RBI Digital Lending Directions 2025 replaced the September 2022 Guidelines on Digital Lending and the June 2023 Guidelines on Default Loss Guarantee (DLG) in Digital Lending. The consolidation was not just administrative. Several provisions were materially strengthened, and the scope of the framework was explicitly extended to cover post-disbursement loan management, including recovery.

The key changes relevant to AI collections are as follows:

- Scope of digital lending now covers recovery: The 2025 Directions define digital lending to include the management and recovery of digital loans, not just their origination. This brings AI collections platforms directly within the regulatory perimeter in a way that earlier language did not make explicit.

- LSP accountability was tightened significantly: Regulated Entities must define roles clearly in contracts with Lending Service Providers, conduct regular audits of LSP conduct, and remain directly responsible for every action an LSP takes on their behalf during collections. There is no shared or transferred liability under the 2025 framework.

- CIMS reporting became mandatory: Regulated Entities must report all Digital Lending Apps, including AI collections platforms that qualify as DLAs under the Directions, on RBI’s Centralised Information Management System (CIMS) portal. The deadline for reporting existing apps was June 15, 2025.

- Explicit, purpose-specific consent is now required: The 2025 Directions require NBFCs and their LSPs to obtain borrower consent that is specific to the purpose for which data is being accessed or the channel through which communication is being initiated. Blanket consent collected at origination is not sufficient for all downstream collections activity.

- Data localisation applies to AI platforms: All borrower data used by AI collections systems must be stored in India-based data centres. Cloud-based AI platforms with data infrastructure outside India are non-compliant with this requirement regardless of their other capabilities.

- Key Fact Statement requirements were strengthened: NBFCs must provide a standardised KFS disclosure of all loan terms, charges, and recovery rights before disbursement. This is relevant to collections because it sets the borrower’s documented expectations against which recovery conduct is measured.

The Scale-Based Regulation Layer

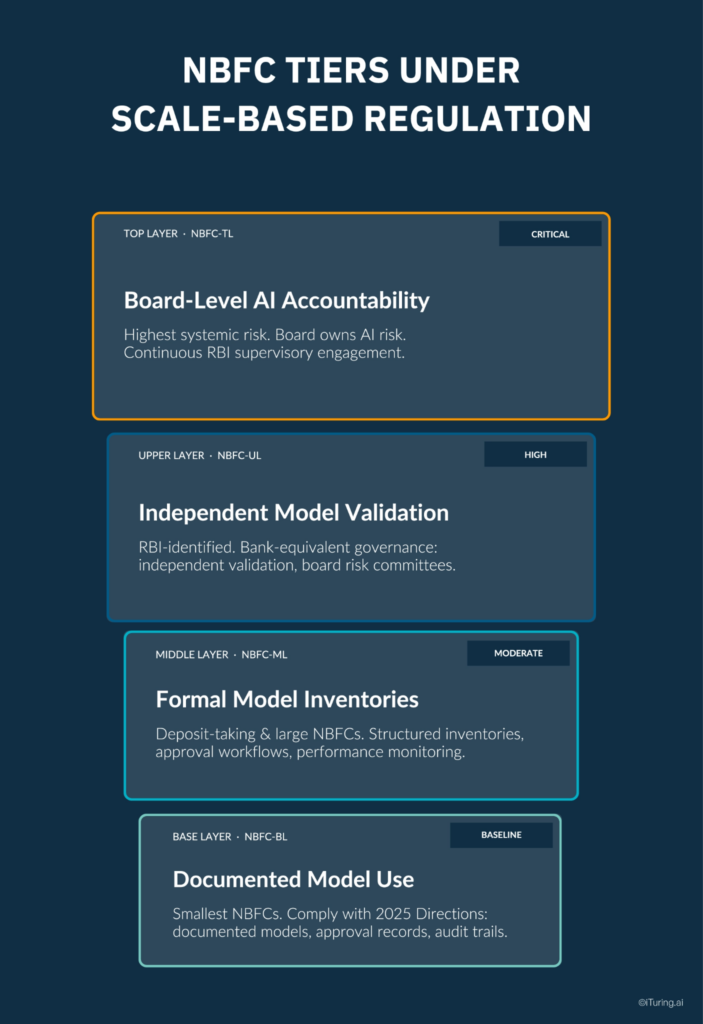

The 2025 Digital Lending Directions apply to all NBFCs. But the depth of AI governance infrastructure an NBFC must maintain is also shaped by RBI’s Scale-Based Regulation framework, which classifies NBFCs into four tiers based on size, complexity, and systemic risk.

Understanding which tier your NBFC sits in determines how comprehensively you need to build AI governance infrastructure, particularly for collections models.

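Base Layer (NBFC-BL): Non-deposit-taking NBFCs with assets below Rs 1,000 crore, along with certain categories such as peer-to-peer lending platforms and Account Aggregators. Must comply fully with the 2025 Directions and Fair Practices Code. In practice, AI governance at this tier is baseline: documented model use, basic approval records, and audit trails for collection interactions.

Middle Layer (NBFC-ML): All deposit-taking NBFCs, non-deposit-taking NBFCs with assets of Rs 1,000 crore and above, and certain categories such as Housing Finance Companies and Core Investment Companies. Must maintain formal model inventories covering all models used in credit and collections decisions. Approval workflows and ongoing performance monitoring are expected.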

Upper Layer (NBFC-UL): NBFCs specifically identified by RBI based on size and systemic importance. Governance requirements are comparable to commercial banks. Independent model validation functions, board-level risk committees, and structured model risk management frameworks are expected for AI systems.

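Top Layer (NBFC-TL): Reserved for NBFCs that pose the highest systemic risk. RBI expects this layer to remain empty unless it sees a substantial increase in systemic risk from specific Upper Layer NBFCs. If populated, it would entail board-level accountability for AI risk management, the most rigorous examination standards, and continuous engagement with RBI supervision.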

For AI collections specifically, NBFCs in the Middle Layer and above are expected to demonstrate that every model touching a loan recovery decision has been formally validated, approved through a documented workflow, and monitored continuously for performance drift and bias. The examination standard for Upper Layer NBFCs means that a collections AI model that cannot produce its approval history, validation evidence, and drift monitoring records is a governance gap, not just an administrative one.
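Continuous drift monitoring is often implemented with a distribution-shift statistic such as the Population Stability Index (PSI). The sketch below compares a collections model’s score distribution at validation time with a current production window. PSI is one common choice, not a technique prescribed by RBI, and the ten equal-width bins and the 0.2 alert threshold are industry rules of thumb assumed here for illustration. Bias monitoring would need separate, segment-level metrics.

```python
import math
from typing import Sequence

# Assumed rule of thumb: PSI above 0.2 is commonly read as a material shift.
# This is an industry convention, not a value set by RBI.
DRIFT_ALERT_THRESHOLD = 0.2


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a current score sample.

    Bins are equal-width over the baseline's range. A small floor on each
    bin share keeps empty bins from producing log(0).
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Production scores outside the baseline range fall into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(baseline: Sequence[float], current: Sequence[float]) -> bool:
    """True when the current window has drifted past the assumed threshold."""
    return psi(baseline, current) > DRIFT_ALERT_THRESHOLD


if __name__ == "__main__":
    validation_scores = [i / 100 for i in range(100)]
    production_scores = [0.9 + i / 1000 for i in range(100)]  # mass piled into the top bin
    print(round(psi(validation_scores, production_scores), 3),
          drift_alert(validation_scores, production_scores))
```

Each PSI value, together with the window it was computed over, is the kind of record that would make up the drift monitoring history described above.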

What the Rules Require at the Collections Call Level

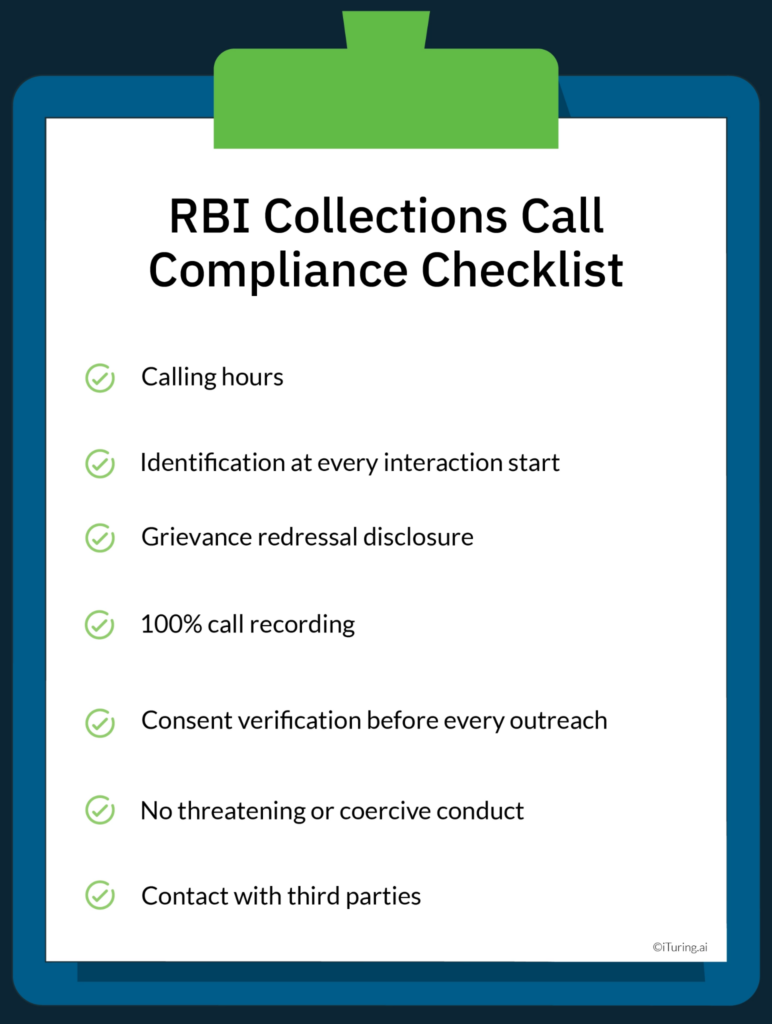

The RBI’s Fair Practices Code for NBFCs, read together with the 2025 Digital Lending Directions, creates a specific set of operational requirements for every AI-initiated collection interaction. These are not aspirational guidelines. They are enforceable obligations with documented penalty histories.

Calling hours: All collection communications, including AI-initiated calls, SMS, and digital messages, are permitted only between 8 AM and 7 PM in the borrower’s local time zone. This restriction applies regardless of the technology used to make the contact. An AI dialer that contacts a borrower at 7:30 AM is in violation, with exactly the same consequences as a human agent making the same call.
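A minimal sketch of a calling-hours gate that sits in front of every channel adapter, assuming timestamps are timezone-aware and the borrower’s zone is known. IST is modelled as a fixed UTC+5:30 offset to keep the example dependency-free. The function names and the choice to treat 7:00 PM itself as outside the window are illustrative assumptions.

```python
from datetime import datetime, time, timedelta, timezone, tzinfo
from typing import Callable

# IST as a fixed offset keeps the sketch free of tz-database dependencies;
# production code would more likely use zoneinfo.ZoneInfo("Asia/Kolkata").
IST = timezone(timedelta(hours=5, minutes=30))

# Window from the text: 8 AM up to 7 PM borrower-local time. Treating 7:00 PM
# itself as outside the window is a conservative assumption.
WINDOW_START = time(8, 0)
WINDOW_END = time(19, 0)


def within_calling_hours(now: datetime, borrower_tz: tzinfo = IST) -> bool:
    """Return True only if the borrower's local clock is inside the window."""
    if now.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    local_time = now.astimezone(borrower_tz).time()
    return WINDOW_START <= local_time < WINDOW_END


def guarded_contact(now: datetime, borrower_tz: tzinfo, send: Callable[[], str]) -> str:
    """Hard gate placed in front of every channel adapter (voice, SMS, WhatsApp).

    Because the check sits below campaign logic, no campaign setting can
    schedule an out-of-hours attempt.
    """
    if not within_calling_hours(now, borrower_tz):
        return "blocked_outside_hours"
    return send()
```

For example, 02:00 UTC is 7:30 AM IST, so `within_calling_hours` returns `False` for that instant. Passing the borrower’s own `tzinfo` covers borrowers outside IST.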

Identification at every interaction start: Every AI-initiated collection call must state, at the outset, the name of the regulated entity on whose behalf the call is being made, the loan reference number, and the nature of the call. The borrower must know who is calling and why before any collection dialogue begins. The Lending Service Provider’s name alone is not sufficient identification.

Grievance redressal disclosure: Borrowers must be informed of the regulated entity’s grievance redressal mechanism during every collection interaction. For AI systems, this means the grievance disclosure must be a mandatory, non-skippable element of every call flow. A call that proceeds to the collection conversation without completing this disclosure is non-compliant regardless of how the collection itself was conducted.
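The identification and grievance requirements above can be modelled as a non-skippable checkpoint in the call flow. The sketch below is hypothetical: the step names are invented, and the strict ordering of the four disclosures is an assumption. The text only requires that identification comes at the outset and that all disclosures are completed before the collection conversation.

```python
from enum import Enum


class Step(Enum):
    IDENTIFY_ENTITY = "regulated entity named"
    LOAN_REFERENCE = "loan reference stated"
    CALL_PURPOSE = "nature of call stated"
    GRIEVANCE = "grievance mechanism disclosed"


# Disclosures that must complete before any collection dialogue. The strict
# ordering is an assumption of this sketch.
REQUIRED = [Step.IDENTIFY_ENTITY, Step.LOAN_REFERENCE, Step.CALL_PURPOSE, Step.GRIEVANCE]


class DisclosureGateError(RuntimeError):
    """Raised when a call flow tries to skip or reorder a mandatory step."""


class CallFlow:
    def __init__(self) -> None:
        self.completed: list[Step] = []

    def complete(self, step: Step) -> None:
        idx = len(self.completed)
        expected = REQUIRED[idx] if idx < len(REQUIRED) else None
        if step is not expected:
            raise DisclosureGateError(f"expected {expected}, got {step}")
        self.completed.append(step)

    def begin_collection_dialogue(self) -> str:
        # Non-skippable checkpoint: the conversation cannot start until every
        # mandatory disclosure has been delivered.
        if self.completed != REQUIRED:
            missing = [s.value for s in REQUIRED if s not in self.completed]
            raise DisclosureGateError(f"disclosures incomplete: {missing}")
        return "collection_dialogue_started"
```

Raising an exception, rather than returning a flag, means a call-flow bug fails loudly instead of silently continuing into the collection conversation.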

100% call recording: The Fair Practices Code mandates recording of all collection communications. For AI systems, this must be architected at the infrastructure level, not left to a campaign configuration option. Every interaction, including unanswered calls, partial connections, and dropped calls, must generate a record.
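One way to make "every attempt generates a record" an infrastructure property is to wrap each channel adapter so the record is written in a `finally` block. In this sketch, `dial` is a hypothetical adapter and `RECORD_STORE` is an in-memory stand-in for durable storage. Neither reflects any particular vendor’s API.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Optional, Tuple


@dataclass
class AttemptRecord:
    attempt_id: str
    borrower_id: str
    channel: str
    started_at: datetime
    outcome: str = "pending"
    recording_ref: Optional[str] = None


# Stand-in for durable, India-hosted storage.
RECORD_STORE: list[AttemptRecord] = []

DialFn = Callable[[], Tuple[str, Optional[str]]]


def place_attempt(borrower_id: str, channel: str, dial: DialFn) -> AttemptRecord:
    """Run one outreach attempt and guarantee that it leaves a record.

    `dial` is a hypothetical channel adapter returning (outcome, recording_ref),
    e.g. ("answered", "rec-123") or ("no_answer", None). The finally block
    writes the record even when the adapter raises, so dropped connections and
    carrier errors are captured too.
    """
    rec = AttemptRecord(str(uuid.uuid4()), borrower_id, channel, datetime.now(timezone.utc))
    try:
        rec.outcome, rec.recording_ref = dial()
    except Exception as exc:
        rec.outcome = f"error:{type(exc).__name__}"
        raise
    finally:
        RECORD_STORE.append(rec)
    return rec
```

Because the error is re-raised after the record is written, upstream retry logic still sees the failure while the audit record stays complete.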

Consent verification before every outreach: The 2025 Directions require that consent be verified before initiating any digital communication channel. An AI system must check the borrower’s current consent status, across every channel being used, before each outreach attempt. A borrower who has opted out of WhatsApp contact must not receive a WhatsApp message even if they remain reachable by voice call.
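A minimal sketch of per-borrower, per-channel consent verification that runs before each attempt and logs the result with a timestamp. `ConsentRegistry` is a hypothetical in-memory store. The default-deny behaviour, where a channel with no recorded consent is treated as not consented, is a design assumption rather than a quoted requirement.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class ConsentCheck:
    borrower_id: str
    channel: str
    granted: bool
    checked_at: datetime


class ConsentRegistry:
    """Hypothetical per-borrower, per-channel consent store with a verification log."""

    def __init__(self) -> None:
        self._status: dict[tuple[str, str], bool] = {}
        self.verification_log: list[ConsentCheck] = []

    def set_consent(self, borrower_id: str, channel: str, granted: bool) -> None:
        self._status[(borrower_id, channel)] = granted

    def verify(self, borrower_id: str, channel: str) -> bool:
        # Default-deny: no recorded consent for a channel means no contact on it.
        granted = self._status.get((borrower_id, channel), False)
        self.verification_log.append(
            ConsentCheck(borrower_id, channel, granted, datetime.now(timezone.utc))
        )
        return granted


def send_if_consented(registry: ConsentRegistry, borrower_id: str, channel: str,
                      send: Callable[[], str]) -> str:
    """Check consent for this exact channel immediately before sending."""
    if not registry.verify(borrower_id, channel):
        return "blocked_no_consent"
    return send()
```

With voice consent granted and WhatsApp consent withdrawn, a voice attempt goes through while a WhatsApp attempt is blocked. Both checks are logged, which provides the per-channel verification evidence an examiner would ask for.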

No threatening or coercive conduct: The Fair Practices Code prohibits the use of threatening, abusive, or harassing language during collection interactions. For AI systems, this requirement is typically satisfied through pre-approved, compliance-reviewed conversation scripts that the AI cannot deviate from. The model must also be capable of detecting escalating tension and de-escalating or terminating an interaction rather than continuing in a direction that could be characterised as harassment.
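The two controls above, scripted output and escalation handling, can be sketched as a script allowlist plus a stopping rule. Everything here is illustrative: the script texts are placeholders, the tension scores are assumed to come from a separate, hypothetical classifier, and the 0.7 threshold and two-turn rule are arbitrary assumptions.

```python
from typing import Iterable

# Hypothetical compliance-reviewed script library: the agent may only emit
# utterances by ID from this table, never free-generated text.
APPROVED_SCRIPTS = {
    "payment_reminder": "Your instalment is overdue. Would you like to discuss repayment options?",
    "offer_callback": "I can arrange a call back at a time convenient to you.",
    "close_call": "Thank you for your time. We are ending this call now.",
}

TENSION_LIMIT = 0.7   # assumed threshold on a 0..1 score
SUSTAINED_TURNS = 2   # consecutive high-tension turns before terminating


def next_utterance(script_id: str) -> str:
    """Return approved text only; anything else is rejected."""
    if script_id not in APPROVED_SCRIPTS:
        raise ValueError(f"script {script_id!r} is not compliance-approved")
    return APPROVED_SCRIPTS[script_id]


def should_terminate(tension_scores: Iterable[float]) -> bool:
    """True once the last SUSTAINED_TURNS scores all exceed TENSION_LIMIT.

    The scores are assumed to come from a separate (hypothetical) classifier
    over the borrower's turns; this function only applies the stopping rule.
    """
    recent = list(tension_scores)[-SUSTAINED_TURNS:]
    return len(recent) == SUSTAINED_TURNS and all(s > TENSION_LIMIT for s in recent)
```

When `should_terminate` fires, the flow would emit the `close_call` script and end the interaction rather than continue the collection dialogue.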

Contact with third parties: AI systems must not contact unauthorised third parties about a borrower’s debt. This means the platform must have controls preventing outreach to anyone other than the borrower, their attorney, or individuals the borrower has specifically authorised to discuss the account.
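The third-party restriction reduces to an allowlist check before any outreach. In this sketch, the `Account` structure and phone-number strings are hypothetical. The point it illustrates is that the permitted set is built only from the borrower and parties the borrower has explicitly authorised.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    borrower_id: str
    borrower_numbers: set[str]
    # Contacts the borrower has explicitly authorised to discuss the account.
    authorised_contacts: set[str] = field(default_factory=set)


def may_contact(account: Account, number: str) -> bool:
    """Allowlist check: only the borrower or an authorised party may be reached.

    Any number not on the allowlist, such as a loan reference or a contact
    the platform happens to know about, is refused.
    """
    return number in account.borrower_numbers or number in account.authorised_contacts
```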

The LSP Accountability Gap Most NBFCs Underestimate

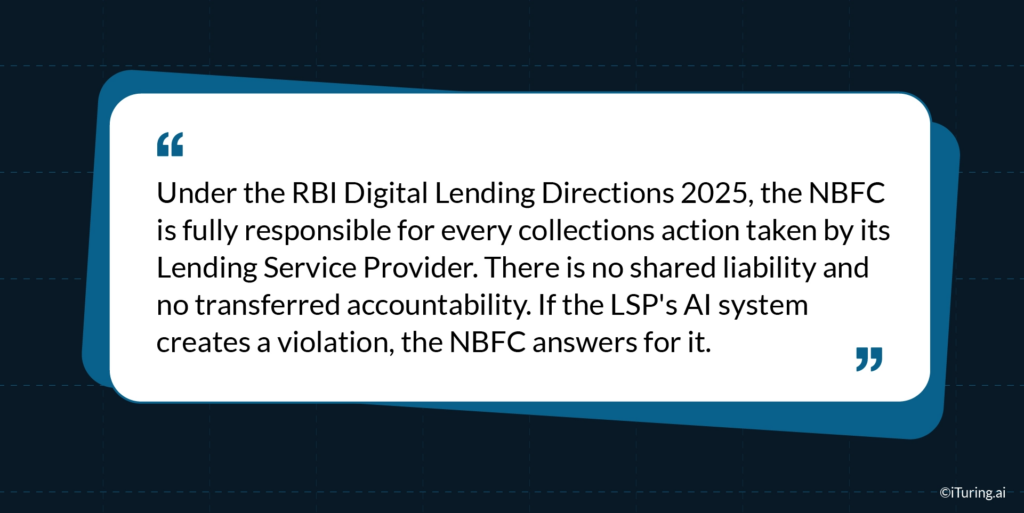

One of the most significant operational implications of the 2025 Directions is the complete absence of shared or transferred liability for LSP conduct. This point deserves precise attention because many NBFCs continue to operate as though deploying a third-party AI collections platform reduces their regulatory exposure.

Under the 2025 framework, the NBFC is the Regulated Entity. Every action taken by an LSP on the NBFC’s behalf during collections is, for regulatory purposes, the NBFC’s action. If an AI collections platform deployed by an LSP calls a borrower at 7 AM, the NBFC receives the regulatory consequence. If the LSP’s AI system fails to verify consent before outreach, the NBFC bears the liability. If the platform’s audit trails are incomplete during an RBI inspection, the documentation gap belongs to the NBFC.

This fundamentally changes how NBFCs should evaluate AI collections vendors. The relevant evaluation questions are no longer limited to recovery performance, cost per contact, or right-party contact rates. They must also include:

- Does the vendor’s platform produce immutable, borrower-level audit trails for every interaction that can be presented to RBI on demand?

- Does the platform enforce calling hours, consent verification, and contact frequency limits at the infrastructure level, not at the campaign configuration level?

- Does the NBFC retain contractual audit rights over the vendor’s platform and data infrastructure?

- Is the platform’s data infrastructure hosted in India-based data centres in compliance with the localisation requirement?

- Can the platform produce documentation sufficient for RBI examination without requiring weeks of manual compilation?

An AI collections vendor that cannot answer all of these questions affirmatively is transferring compliance risk to the NBFC, not absorbing it.

Data Protection Under the DPDP Act 2023

The Digital Personal Data Protection Act 2023 operates in parallel with the RBI Directions, and the two frameworks together create a layered compliance obligation for any AI collections system that processes borrower data.

Under the DPDP Act, borrowers are “data principals” with specific rights over their personal data. The following rights are directly relevant to AI collections operations:

- Right to access: A borrower can request a summary of the personal data being processed about them by the AI system.

- Right to correction and erasure: If a borrower disputes the accuracy of data the AI is using to make collection decisions, the system must support a correction workflow. Data cannot simply be retained and acted upon if its accuracy is contested.

- Right to grievance redressal: Borrowers must have a mechanism to raise complaints about how their data is being handled during collections, with mandated resolution timelines.

- Right to withdraw consent: Where data processing is based on consent, borrowers can withdraw it. AI systems must be capable of acting on withdrawal in real time, removing the borrower from active outreach immediately upon consent withdrawal.
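Acting on consent withdrawal "in real time" implies that the withdrawal event cancels work already queued, not just future scheduling. The sketch below assumes withdrawal is tracked per channel and that attempts sit in a hypothetical in-process queue. A real deployment would apply the same logic to its actual scheduler.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingAttempt:
    borrower_id: str
    channel: str
    scheduled_for: str  # ISO timestamp kept as text for brevity


class OutreachQueue:
    """Hypothetical scheduler that drops queued work the moment consent is withdrawn."""

    def __init__(self) -> None:
        self._pending: dict[str, list[PendingAttempt]] = defaultdict(list)
        self._withdrawn: set[tuple[str, str]] = set()

    def schedule(self, attempt: PendingAttempt) -> bool:
        # Refuse new work on a channel whose consent has been withdrawn.
        if (attempt.borrower_id, attempt.channel) in self._withdrawn:
            return False
        self._pending[attempt.borrower_id].append(attempt)
        return True

    def on_consent_withdrawn(self, borrower_id: str, channel: str) -> int:
        """Record the withdrawal and cancel queued attempts; returns how many were removed."""
        self._withdrawn.add((borrower_id, channel))
        before = self._pending[borrower_id]
        kept = [a for a in before if a.channel != channel]
        self._pending[borrower_id] = kept
        return len(before) - len(kept)

    def pending(self, borrower_id: str) -> list[PendingAttempt]:
        return list(self._pending[borrower_id])
```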

Beyond individual rights, the DPDP Act imposes structural obligations on AI collections platforms:

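- Data used for collections must have a documented lawful basis under the Act: either the borrower’s consent for a specified purpose, or one of the “certain legitimate uses” listed in Section 7, such as processing for a specified purpose for which the borrower voluntarily provided the data. Unlike the GDPR, the Act has no general contractual-necessity ground, so the basis for each collections use should be documented explicitly.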

- Borrower data collected during loan origination cannot be repurposed for AI-driven collections scoring without a separate legal basis or fresh consent. The purpose for which data was originally collected constrains the purposes for which it can be used downstream.

- Retention limits require that data used in AI collections models not be held beyond the period necessary for the stated purpose. Call recordings, behavioural signals, and scoring inputs must be subject to defined retention policies with automated purging.

- The principle of data minimisation means AI systems should access and process only the data fields genuinely required for the specific collection interaction, not a borrower’s full data profile indiscriminately.
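The purpose-limitation, retention, and minimisation obligations above can be sketched as two small mechanisms: a field-level access function that returns only what a task needs and the purpose permits, and a retention sweep. All field names, purpose tags, task definitions, and retention periods below are hypothetical placeholders. Actual periods depend on each NBFC’s legal and regulatory record-keeping obligations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical mapping of each field to the purposes it may be used for.
FIELD_PURPOSES = {
    "name": {"origination", "collections"},
    "loan_reference": {"origination", "collections"},
    "overdue_amount": {"collections"},
    "preferred_language": {"collections"},
    "income_documents": {"origination"},
}

# Fields a given collections task actually needs (data minimisation).
TASK_FIELDS = {
    "reminder_call": ["name", "loan_reference", "overdue_amount", "preferred_language"],
}


def fields_for(task: str, purpose: str, profile: dict) -> dict:
    """Return only fields the task needs AND the stated purpose permits."""
    return {
        f: profile[f]
        for f in TASK_FIELDS[task]
        if purpose in FIELD_PURPOSES.get(f, set()) and f in profile
    }


@dataclass
class StoredItem:
    kind: str  # e.g. "call_recording", "scoring_input"
    created_at: datetime


# Placeholder retention periods, not legal requirements.
RETENTION = {"call_recording": timedelta(days=365), "scoring_input": timedelta(days=180)}


def purge_expired(items: list[StoredItem], now: datetime) -> tuple[list[StoredItem], list[StoredItem]]:
    """Split items into (kept, purged) using per-kind retention periods."""
    kept: list[StoredItem] = []
    purged: list[StoredItem] = []
    for item in items:
        (purged if now - item.created_at > RETENTION[item.kind] else kept).append(item)
    return kept, purged
```

Returning the purged list, rather than discarding it silently, lets the platform log what was deleted and when. That log is what makes deletion provable.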

For NBFCs evaluating AI collections platforms, DPDP Act compliance requires the vendor to demonstrate field-level access controls, automated retention schedules with provable deletion, processing activity logs, and the technical capability to support data principal requests within the timelines prescribed under the Act and the rules made under it.

What NPA Recovery AI Must Demonstrate Under Examination

RBI examinations of NBFCs with AI collections deployments are focused on documentation, governance, and evidence rather than on theoretical compliance frameworks. The question an examiner asks is not whether the NBFC has the right policies. The question is whether the NBFC can prove, for any given borrower interaction, that the AI operated within the regulatory boundaries.

An NPA recovery AI platform that can withstand RBI examination must be capable of producing the following on demand:

- A complete, timestamped audit trail for every collection communication attempt, including AI-initiated voice calls, SMS, digital messages, and failed or unanswered contact attempts, with channel identification for each

- Proof that consent was verified before each outreach attempt, logged per borrower per channel, with a timestamp for the verification

- Model performance records showing NPA recovery rates over time, false positive rates on risk scoring, and the history of any model updates or retraining events

- Drift monitoring records demonstrating that the model’s performance has been tracked continuously since deployment and that any material shift was identified and addressed

- LSP contract documentation confirming the NBFC’s audit rights over the platform and the NBFC’s oversight process

- Grievance redressal logs showing that borrower complaints were received, routed, and resolved within the required timelines

- Data localisation compliance evidence confirming that all borrower data processed by the platform is stored and managed within India
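An "immutable" audit trail is commonly approximated in software with a hash chain: each entry commits to the hash of the one before it, so any later edit or deletion is detectable. The sketch below shows that technique with SHA-256 over canonical JSON. It makes the log tamper-evident only. True immutability would additionally rely on write-once storage, which is assumed here and not shown. The event names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of collection events."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, borrower_id: str, channel: str, event: str,
               at: Optional[datetime] = None) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "borrower_id": borrower_id,
            "channel": channel,
            "event": event,
            "at": (at or datetime.now(timezone.utc)).isoformat(),
            "prev": prev,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link; any edited or removed entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def for_borrower(self, borrower_id: str) -> list[dict]:
        """Borrower-level extract of the kind an examiner might request."""
        return [e for e in self.entries if e["borrower_id"] == borrower_id]
```

Running `verify()` over the log before producing a `for_borrower` extract gives some assurance that the extract reflects the records as originally written.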

These are not documentation items that can be constructed after an examination notice arrives. They must be produced by the platform as a routine output of normal operations, or the NBFC faces a documentation gap that is treated as a governance failure.

The Scale of the Compliance Risk

RBI levied Rs 48 crore in aggregate penalties on NBFCs for collection-related Fair Practices Code violations in FY 2024-25 alone. Individual penalties for FPC violations range from Rs 5 lakh to Rs 2 crore per instance, with repeat offenders facing the possibility of licence-level action.

Beyond direct penalties, the operational cost of a regulatory show-cause notice is significant. Management attention is diverted for months. Third-party audits are often mandated as a remediation condition. In cases where violations affected large borrower populations, consumer protection proceedings can follow separately.

The risk calculus for AI collections is not just whether the platform performs well on recovery metrics. Every interaction the AI initiates on behalf of the NBFC is a potential regulatory event. A platform making hundreds of thousands of outreach attempts per month that operates without adequate compliance infrastructure is generating a corresponding volume of regulatory exposure.

Building NBFC AI Collections Governance That Works

The most effective approach to RBI compliance for AI collections is to treat governance as a design requirement for every model and platform, not as a review layer added after deployment.

For NBFC leadership evaluating or upgrading AI collections infrastructure, the following elements represent the baseline governance standard for 2026:

- A model inventory covering every AI model and scoring system that touches a loan recovery decision, with documented approval history and validation evidence for each

- Calling hour enforcement built at the infrastructure level, with time-zone-aware hard restrictions that cannot be overridden by campaign configuration

- Per-borrower consent verification that runs before each outreach attempt, with a logged record of the result

- Contact frequency tracking that operates across all channels simultaneously, enforcing a single aggregate limit rather than separate per-channel limits that can still add up to excessive contact in combination

- Mandatory disclosure checkpoints in every call flow that cannot be bypassed, covering regulated entity identity, loan reference, and grievance mechanism

- Immutable audit trail generation for every interaction, stored in India-based infrastructure, accessible within seconds rather than requiring manual compilation

- Continuous model performance monitoring with alerts for drift and bias, operating post-deployment rather than only at model build time

- LSP contracts that explicitly provide the NBFC with audit rights over the vendor’s platform and compliance documentation
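The aggregate contact-frequency control in the list above can be sketched as a rolling-window limiter keyed on the borrower rather than on the channel. The limit of three contacts per 24 hours is a hypothetical placeholder, not a figure from the RBI Directions, and the sketch assumes attempts arrive in timestamp order.

```python
from collections import deque
from datetime import datetime, timedelta


class AggregateContactLimiter:
    """Caps total contacts per borrower across ALL channels in a rolling window.

    The default limit and window are illustrative assumptions only.
    """

    def __init__(self, max_contacts: int = 3, window: timedelta = timedelta(days=1)) -> None:
        self.max_contacts = max_contacts
        self.window = window
        self._history: dict[str, deque] = {}

    def try_contact(self, borrower_id: str, channel: str, at: datetime) -> bool:
        """Record and allow the contact if the borrower is under the aggregate cap.

        Assumes calls arrive in timestamp order. The channel is stored for the
        audit record but deliberately ignored when counting.
        """
        hist = self._history.setdefault(borrower_id, deque())
        # Drop contacts that have aged out of the rolling window.
        while hist and at - hist[0][0] >= self.window:
            hist.popleft()
        if len(hist) >= self.max_contacts:
            return False
        hist.append((at, channel))
        return True
```

With a cap of three, a voice call, an SMS, and a WhatsApp message in the same morning exhaust the borrower’s allowance, so a fourth contact on any channel is refused. Three independent per-channel caps would not have stopped it.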

How iTuring Approaches This

iTuring was built for regulated industries, which means the compliance infrastructure described above is built into the platform rather than assembled from separate tools or maintained through manual review processes.

For NBFCs deploying AI collections through iTuring, governance operates at the model level. Every model carries SHAP and LIME explainability at the individual prediction level. Immutable audit trails are generated for every model action. Maker-checker approval workflows require documented human sign-off before any model goes into production or is updated. Continuous monitoring across 60 parameters covers performance, drift, and bias, with alerts triggered before a problem reaches a regulatory examination.

The platform supports the documentation requirements that RBI examinations demand: complete model lineage from data to decision, approval history, explainability records, and monitoring logs, all compilable into an examination-ready package within hours.

For Middle Layer and Upper Layer NBFCs that face the most structured governance expectations under Scale-Based Regulation, this means the AI collections governance infrastructure and the model risk management infrastructure are the same system, not separate compliance projects running in parallel.

Book a discovery session with iTuring to understand how the platform’s governance layer maps to RBI’s 2025 requirements for your specific NBFC tier.