TL;DR

- ECOA adverse action obligations apply fully to AI-driven credit decisions

- Checklist reasons that do not match what the model actually did fail Regulation B’s specific-reasons requirement

- AI models must produce specific, per-decision explanations for each outcome

- Nontraditional data signals in AI models create heightened disclosure risk

- Bona fide error defense requires documented, proactive compliance procedures

Most of us have met the vending machine that takes your payment, rejects your selection, and gives you nothing in return. No receipt. No error message. No explanation. The transaction happened at machine speed, and the only record of it sits in a server log you will never see. Now place that machine in the middle of a consumer’s financial life, deciding whether their account gets restructured, their credit limit gets cut, or their debt gets escalated to intensive recovery. The decision happens in milliseconds. The legal obligation to explain it does not disappear because a machine made the choice.

This is the compliance gap that many collections operations have not fully closed in 2026. AI systems are making decisions about consumers at a scale and speed that human agents never could. But the obligation to disclose the reasons for those decisions, in specific and accurate terms, applies with exactly the same force as it did when a human underwriter made them by hand. Getting this wrong does not just create regulatory risk. It creates a record of non-compliance that compounds with every automated decision the model makes.

What Adverse Action Means in a Collections Context

Adverse action notices are widely understood as a credit underwriting concern. Many collections teams operate on the assumption that their obligations begin and end with contact rules. That assumption creates real exposure.

Under ECOA and Regulation B (12 C.F.R. Part 1002), adverse action is not limited to outright application denials. It also includes terminating an account and making an unfavorable change in the terms of an account that does not affect all or substantially all of a class of the creditor’s accounts. Depending on the facts, the following collections decisions can fall within the adverse action framework:

- Reducing a consumer’s credit limit during a collections review

- Changing repayment terms on a delinquent account

- Refusing to restructure a delinquent account at the consumer’s request

- Closing an account based on AI-scored risk assessment

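- Routing a consumer to a materially more intensive collections treatment based on a model score

Each of these needs case-by-case analysis. Regulation B excludes from the definition any action or forbearance relating to an account taken in connection with inactivity, default, or delinquency as to that account (12 C.F.R. 1002.2(c)(2)(ii)), so whether a notice is required can turn on why the model acted, not only on what it did.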

The FCRA adds a parallel obligation. When information from a consumer report contributed to the decision, the creditor must also disclose the consumer reporting agency’s name and contact information and, where a credit score was used, the score and the key factors that adversely affected it. These FCRA disclosures can be combined with the ECOA notice in a single document, but both sets of content are required. An AI model that ingests bureau data as one of its inputs, as many collections scoring models do, will frequently trigger both requirements at once.
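Because both sets of disclosures can attach to the same decision, a notice-assembly step can check that the FCRA content is present whenever bureau data fed the model. The sketch below is a minimal illustration under stated assumptions, not a compliance tool: the `AdverseActionNotice` class, its field names, and the `missing_disclosures` helper are hypothetical, and the checked fields are a simplified subset of what the statute requires.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AdverseActionNotice:
    """Hypothetical notice record; field names are illustrative assumptions."""
    ecoa_reasons: List[str]                # specific principal reasons (ECOA / Reg B)
    bureau_data_used: bool                 # did consumer report data contribute?
    cra_name: Optional[str] = None         # FCRA: consumer reporting agency name
    cra_contact: Optional[str] = None      # FCRA: CRA address / toll-free number
    credit_score: Optional[int] = None     # FCRA: disclosed if a score was used
    score_key_factors: List[str] = field(default_factory=list)


def missing_disclosures(notice: AdverseActionNotice) -> List[str]:
    """Return names of required elements that are absent (simplified subset)."""
    missing: List[str] = []
    if not notice.ecoa_reasons:
        missing.append("ecoa_reasons")
    if notice.bureau_data_used:
        # FCRA content is required in addition to, not instead of, ECOA reasons.
        if not notice.cra_name:
            missing.append("cra_name")
        if not notice.cra_contact:
            missing.append("cra_contact")
        if notice.credit_score is not None and not notice.score_key_factors:
            missing.append("score_key_factors")
    return missing


notice = AdverseActionNotice(
    ecoa_reasons=["Proportion of balance to credit limit too high"],
    bureau_data_used=True,
    cra_name="Example CRA",  # hypothetical agency name
)
print(missing_disclosures(notice))  # ['cra_contact']
```

A check like this would typically run before a notice is released, so an incomplete notice blocks the workflow instead of reaching the consumer.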

What the CFPB Has Required Since 2022

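The CFPB has published two circulars addressing how ECOA and Regulation B’s adverse action requirements apply to AI-driven decisions. The CFPB withdrew a large set of guidance documents in May 2025, so the circulars’ current status as guidance is worth confirming. Both, however, interpret requirements written into Regulation B itself, including the rule that a statement of reasons must be specific and indicate the principal reasons for the adverse action (12 C.F.R. 1002.9(b)(2)), and those requirements apply whatever the status of the guidance.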

CFPB Circular 2022-03 established the foundational principle: ECOA and Regulation B do not permit creditors to use complex algorithms when doing so means they cannot provide specific and accurate reasons for adverse actions. The complexity of an AI model is not a defense. If a model cannot produce reasons that meet the legal standard, the institution must build that capability before using the model for decisions that trigger adverse action obligations.

CFPB Circular 2023-03 addressed the sample forms directly. Appendix C to Regulation B contains sample adverse action forms with a checklist of reasons. The CFPB confirmed in 2023 that using those forms satisfies the requirement only if the reasons checked are specific and accurate for the individual case. A creditor that checks the closest matching reason on the list, when that reason does not accurately reflect what the model actually did, is not compliant, however closely the selected reason resembles the correct one.

Skadden’s analysis of Circular 2023-03 identified a specific example the CFPB provided: if a complex algorithm denies credit because of an applicant’s chosen profession, stating “insufficient projected income” as the reason would likely not satisfy the disclosure obligation. The reason disclosed must describe the actual factor the model used, even if that factor is non-intuitive by traditional underwriting standards.
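One way to test for the “closest checklist reason” problem is to compare each disclosed reason against the reasons derived from the model’s own attributions for that decision. The sketch below assumes per-decision feature contributions are already available (for example from SHAP) and that positive contributions push toward the adverse outcome; the function names, feature names, and reason text are hypothetical.

```python
from typing import Dict, List, Sequence


def expected_reasons(
    contributions: Dict[str, float],
    reason_text: Dict[str, str],
    top_k: int = 4,
) -> List[str]:
    """Map the model's strongest adverse drivers to pre-approved reason text.

    Assumes positive contributions push toward the adverse outcome.
    """
    adverse = [(f, c) for f, c in contributions.items() if c > 0]
    adverse.sort(key=lambda fc: fc[1], reverse=True)  # strongest driver first
    return [reason_text[f] for f, _ in adverse[:top_k]]


def disclosure_matches_model(
    disclosed: Sequence[str],
    contributions: Dict[str, float],
    reason_text: Dict[str, str],
    top_k: int = 4,
) -> bool:
    """True only if the disclosed reasons are the model-derived reasons (order ignored)."""
    return set(disclosed) == set(expected_reasons(contributions, reason_text, top_k))


# Hypothetical case echoing the CFPB example: the main driver is occupation.
contributions = {"occupation_category": 1.2, "utilization": 0.4, "months_on_book": -0.3}
reason_text = {
    "occupation_category": "Occupation or profession",
    "utilization": "Proportion of balance to credit limit too high",
    "months_on_book": "Length of account history",
}
print(disclosure_matches_model(["Insufficient projected income"], contributions, reason_text, top_k=1))  # False
print(disclosure_matches_model(["Occupation or profession"], contributions, reason_text, top_k=1))  # True
```

The comparison only works if the reason text is fixed per variable in advance; a free-text reason typed after the fact cannot be checked this way.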

The Nontraditional Data Problem

One of the most significant compliance risks in AI collections models is the use of nontraditional or behavioral data signals, because standard reason language is least likely to describe accurately how these signals affected a decision.

Circular 2023-03 gives a concrete example. When a creditor lowers a consumer’s credit limit or closes an account based on behavioral data, such as the type of establishment at which a consumer shops, the type of goods purchased, or purchasing location, stating “purchasing history” as the reason would likely be insufficient. The creditor would likely need to disclose more specific details about the behavioral data that actually drove the decision.

The CFPB also flagged data harvested through “consumer surveillance” as an elevated risk category: data gathered from outside a consumer’s credit file or loan application and fed into an algorithmic model as a predictive signal. The concern is twofold: consumers would not anticipate these factors as relevant to their financial treatment, and such factors may carry fair lending risk if they correlate with protected class membership.

For collections teams, this creates a practical pre-deployment checklist item. Every nontraditional data signal used in a collections AI model must be mappable to a plain-language, consumer-understandable disclosure that is specific enough to satisfy the CFPB’s standard before that model goes into production.
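That checklist item can be enforced mechanically as a deployment gate: every model input must have a mapped disclosure, and known-generic wording is rejected. The sketch below is a minimal illustration; `deployment_blockers`, the feature names, and most of the blocklist are hypothetical (only “purchasing history” comes from the CFPB example above).

```python
from typing import Dict, List, Set

# Hypothetical blocklist of reason phrases too generic to stand alone.
# "purchasing history" is the CFPB's example; the others are assumptions.
GENERIC_PHRASES: Set[str] = {"purchasing history", "behavioral data", "other"}


def deployment_blockers(
    model_features: List[str],
    reason_map: Dict[str, str],
    generic_phrases: Set[str] = GENERIC_PHRASES,
) -> Dict[str, str]:
    """Return {feature: problem} for every input that lacks a usable disclosure."""
    problems: Dict[str, str] = {}
    for feature in model_features:
        text = reason_map.get(feature, "").strip()
        if not text:
            problems[feature] = "no reason mapped"
        elif text.lower() in generic_phrases:
            problems[feature] = "reason too generic"
    return problems


features = ["utilization", "merchant_category_share", "late_night_spend_ratio"]
reasons = {
    "utilization": "Proportion of balance to credit limit too high",
    "merchant_category_share": "Purchasing history",
}
print(deployment_blockers(features, reasons))
# {'merchant_category_share': 'reason too generic', 'late_night_spend_ratio': 'no reason mapped'}
```

A phrase filter only catches wording already known to be inadequate; whether a mapped reason is specific enough still needs legal review before the gate is cleared.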

The Bona Fide Error Defense and What It Demands

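The FDCPA’s bona fide error defense (15 U.S.C. 1692k(c)) shields a debt collector from liability for a violation it shows was unintentional and resulted from a bona fide error notwithstanding the maintenance of procedures reasonably adapted to avoid any such error. The operative word is “maintenance.” The defense requires documented, proactive compliance infrastructure, not a retroactive account of good intentions after a violation has been identified. Regulation B applies the same logic to adverse action notices: its inadvertent-error provision (12 C.F.R. 1002.16(c)) covers only mechanical, electronic, or clerical errors that occurred notwithstanding the maintenance of procedures reasonably adapted to avoid them.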

For AI collections operations, maintaining the bona fide error defense in practice requires:

- Version-controlled records of every model that went into production, including the approval documentation and compliance review completed before deployment

- Time-stamped audit logs for every automated consumer interaction, showing which model version produced which decision at which moment

- Documented mapping of every model input variable to a corresponding adverse action reason, validated for specificity before deployment

- Evidence of ongoing monitoring that would catch a newly emerging compliance gap, such as model drift that changes which variables are driving decisions
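The audit-log requirement can be sketched as an append-only, hash-chained record: each entry stores the hash of the previous one, so altering or deleting an earlier decision breaks the chain. This is an illustrative assumption about one way to get tamper evidence, not a description of any particular platform; `DecisionLog`, its fields, and the model version string are hypothetical, and a production system would also need write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, Any]) -> str:
    """Hash a record deterministically (sorted keys, UTF-8)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Hypothetical append-only log linking each decision to a model version."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, account_id: str, model_version: str, decision: str,
               reasons: List[str]) -> Dict[str, Any]:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "account_id": account_id,
            "model_version": model_version,
            "decision": decision,
            "reasons": reasons,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False if any entry was altered or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.append("acct-001", "collections-risk-v3.2", "credit_limit_reduced",
           ["Proportion of balance to credit limit too high"])
log.append("acct-002", "collections-risk-v3.2", "no_action", [])
print(log.verify())  # True
```

Hash chaining makes tampering detectable rather than impossible, which is why it is usually paired with storage that cannot be overwritten.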

An institution that can produce this documentation when a violation is alleged has a credible defense. An institution that cannot is exposed regardless of intent. The AI model’s speed and scale work against the institution in a litigation or examination context if the records to support the defense were never built.

Building Adverse Action Compliance Into AI Collections Models

Adverse action compliance in an AI collections environment is an engineering and governance problem, not just a legal review problem. The decisions that determine whether the institution can satisfy its obligations are made when the model is being built and approved, not after it is in production.

The following steps, taken before a collections AI model goes live, establish the foundation for compliant operation:

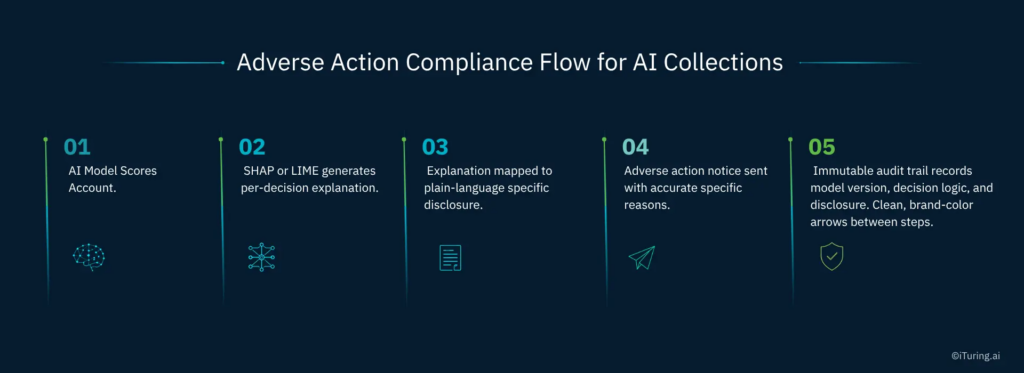

- Implement SHAP or LIME explainability at the individual prediction level so that every scored account has a decision-specific explanation, not a summary of overall model behavior

- Map every input variable, including nontraditional behavioral signals, to a plain-language adverse action reason that is accurate, specific, and pre-approved for use in consumer disclosures

- Tie adverse action notice generation into the model’s deployment workflow so that the explanation produced at decision time is the explanation used in the disclosure, with no manual translation in between

- Maintain immutable records linking each adverse action to the exact model version, the input data used, and the explanation generated at that moment

- Conduct periodic post-deployment testing of disclosure accuracy to confirm that as the model evolves, the explanations it generates continue to satisfy the specificity standard
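The first four steps can be sketched end to end for the simplest case. This example swaps in a logistic regression, where exact SHAP values on the log-odds scale have a closed form (the weight times the feature’s deviation from a background mean, assuming independent features), so no explainability library is needed; tree ensembles or neural networks would need a library such as `shap` instead. The weights, feature names, reason text, and `MODEL_VERSION` string are hypothetical. The point is that the decision, the attributions, the reasons, and the model version come out of a single call, so the notice can use exactly what the model produced.

```python
import math
from typing import Dict, List

# Hypothetical model artifact: logistic regression scoring the probability of
# an adverse collections action. Every name and number is illustrative.
MODEL_VERSION = "collections-risk-v3.2"
INTERCEPT = -1.0
WEIGHTS = {"utilization": 2.0, "months_since_last_payment": 0.8, "merchant_category_share": 1.5}
# Background (training-set) means used as the SHAP reference point.
BACKGROUND_MEANS = {"utilization": 0.45, "months_since_last_payment": 1.0, "merchant_category_share": 0.20}
# Pre-approved, plain-language reason for every input variable.
REASON_TEXT = {
    "utilization": "Proportion of balance to credit limit too high",
    "months_since_last_payment": "Time since last payment too long",
    "merchant_category_share": "Share of recent spending in one merchant category too high",
}


def linear_shap(x: Dict[str, float]) -> Dict[str, float]:
    """Exact SHAP values (log-odds) for a linear model with independent
    features: phi_i = w_i * (x_i - mean_i)."""
    return {f: WEIGHTS[f] * (x[f] - BACKGROUND_MEANS[f]) for f in WEIGHTS}


def score_and_explain(x: Dict[str, float], threshold: float = 0.5, top_k: int = 4) -> Dict[str, object]:
    """Score one account and return decision, attributions, and reasons together."""
    logit = INTERCEPT + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)
    probability = 1.0 / (1.0 + math.exp(-logit))
    decision = "adverse" if probability >= threshold else "no_action"
    phi = linear_shap(x)
    # Positive SHAP values push this account toward the adverse outcome.
    drivers = sorted((f for f in phi if phi[f] > 0), key=lambda f: phi[f], reverse=True)[:top_k]
    reasons: List[str] = [REASON_TEXT[f] for f in drivers] if decision == "adverse" else []
    return {
        "model_version": MODEL_VERSION,
        "decision": decision,
        "probability": probability,
        "shap_values": phi,
        "reasons": reasons,
    }


result = score_and_explain({"utilization": 0.95, "months_since_last_payment": 3, "merchant_category_share": 0.20})
print(result["decision"], result["reasons"])
# adverse ['Time since last payment too long', 'Proportion of balance to credit limit too high']
```

Because SHAP values sum to the difference between the account’s log-odds and the background log-odds, a periodic test can also assert that property to catch explanation code that has drifted out of sync with the deployed model.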

Why the Standard Is Higher Than Many Teams Realize

Regulation B’s sample forms have been part of the framework for decades. The official staff commentary on selecting principal reasons describes methods built for points-based credit scoring systems, long before machine learning models became common in consumer finance. Neither the sample forms nor the commentary was designed for models that combine thousands of variables non-linearly.
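For contrast, the commentary’s approach is easy to express for a traditional additive scorecard. One method it describes is to identify the factors for which the applicant’s points fell furthest below the average points for that factor in a reference group (applicants at or slightly above the cutoff score, or all applicants). The sketch below assumes such a scorecard; `commentary_style_reasons`, the factor names, and the numbers are hypothetical. It depends on every factor having its own points, which a model combining thousands of interacting variables does not provide.

```python
from typing import Dict, List


def commentary_style_reasons(
    applicant_points: Dict[str, float],
    reference_avg_points: Dict[str, float],
    top_k: int = 4,
) -> List[str]:
    """Rank scorecard factors by how far the applicant's points fall below a
    reference-group average for the same factor (additive scorecard assumed)."""
    shortfall = {
        factor: reference_avg_points[factor] - points
        for factor, points in applicant_points.items()
    }
    below = [f for f, gap in shortfall.items() if gap > 0]
    below.sort(key=lambda f: shortfall[f], reverse=True)  # largest shortfall first
    return below[:top_k]


applicant = {"time_at_address": 10, "age_of_automobile": 5, "utilization": 20}
reference = {"time_at_address": 18, "age_of_automobile": 25, "utilization": 22}
print(commentary_style_reasons(applicant, reference, top_k=2))
# ['age_of_automobile', 'time_at_address']
```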

The CFPB has been explicit that this gap does not create flexibility for institutions. Regulation B already allows creditors to state reasons that do not appear on the sample forms, and the CFPB treats that latitude as an obligation: when the checklist cannot accurately describe the factors the model actually considered, the creditor must go beyond it. That latitude is not a loophole.

Collections teams that treat Regulation B’s model forms as a compliance safe harbor for AI-driven decisions are operating on an assumption the CFPB has publicly and specifically rejected.

How iTuring Addresses This

The explainability and audit infrastructure required to satisfy the CFPB’s adverse action notice standard for AI collections is built into the iTuring platform as a structural property of how models are deployed, not as an optional add-on.

Every model in iTuring generates SHAP and LIME explanations at the individual prediction level. Every model version is tracked with immutable lineage records. Maker-checker approval workflows ensure that before any model goes into production, its inputs, outputs, and adverse action disclosures have been reviewed and documented. One-click exam documentation packages compile this evidence into a reviewable format that can be produced to a regulator within hours.

For collections teams building or scaling AI infrastructure in 2026, the adverse action notice obligation is one of the clearest tests of whether a platform’s governance is sufficient. Book a discovery session with iTuring to see how the platform handles this in a live environment.