TL;DR

- The PA/FSCA November 2025 joint report found AI governance frameworks across SA’s financial sector are still developing and vary widely in maturity

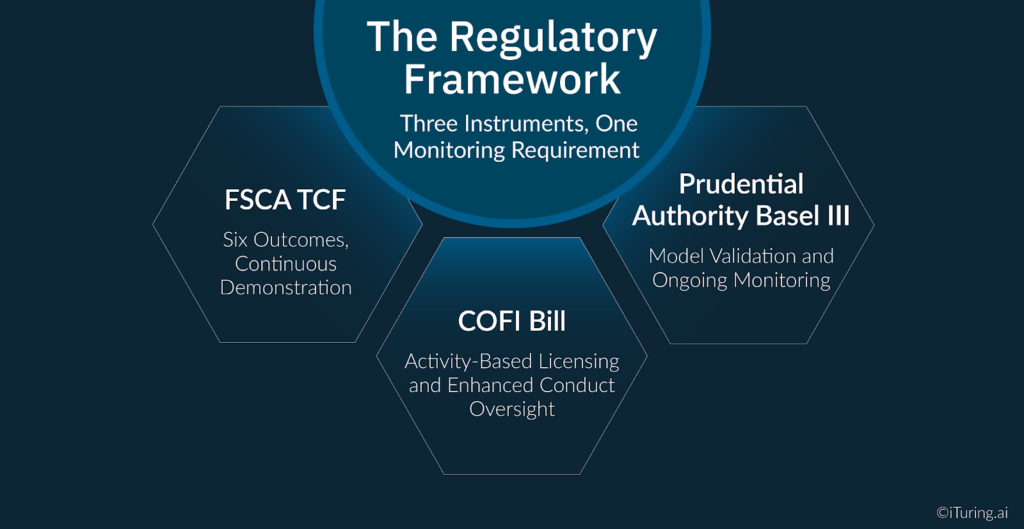

- Three regulatory instruments (FSCA TCF, Prudential Authority Basel III, and the COFI Bill) each impose monitoring obligations that overlap and reinforce one another

- POPIA Section 71(3)(b) requires explainability for automated decisions affecting consumers

- Disparate impact monitoring across race and gender is a SA-specific obligation with no direct equivalent in US or European collections governance frameworks

- NCA 2025 amendment proposals tighten data accuracy requirements that directly affect AI model input monitoring

In November 2025, the Prudential Authority and the FSCA published their joint report on AI adoption across South Africa’s financial sector. The finding on AI governance monitoring was precise: governance frameworks for AI are still developing across the sector and vary widely in maturity, with many institutions relying on existing risk-management structures rather than dedicated AI governance arrangements.

That finding describes the current state. The regulatory direction of travel describes what will be required.

Three separate regulatory instruments are converging on the same operational obligation for South African banks and insurers running AI collections models. The FSCA’s Treating Customers Fairly framework requires that fair treatment of customers is demonstrably delivered throughout the product lifecycle, including post-sale servicing and collections. The Prudential Authority’s Basel III standards mandate model validation, ongoing monitoring, and board-level oversight for internal models. The COFI Bill, once enacted, will require that all financial institutions holding a Conduct Licence demonstrate ongoing compliance with an enhanced market conduct regime that covers the full customer interaction lifecycle.

Each framework, examined separately, creates a monitoring obligation. Together, they create an obligation more comprehensive than any single framework suggests. For South African banks and insurers deploying AI collections models, the gap between a governance policy and purpose-built AI governance solutions is precisely where regulatory exposure lives in 2026.

The Regulatory Framework: Three Instruments, One Monitoring Requirement

Understanding what monitoring is required begins with understanding what each regulatory instrument demands and how the demands interact.

FSCA TCF: Six Outcomes, Continuous Demonstration. TCF is an outcomes-based regulatory approach requiring regulated institutions to demonstrate that specific fairness outcomes are delivered to customers throughout the product lifecycle. The six TCF outcomes span product design, promotion, advice, servicing, and complaints handling. For collections AI, three outcomes are directly relevant. Outcome 1 requires that fair treatment is central to firm culture, meaning the collections AI system must operate within a fair treatment architecture by design. Outcome 4 requires that advice and recommendations are suitable and based on the individual customer’s circumstances, which applies to AI-generated collections contact strategies. Outcome 6 requires that there are no unreasonable post-sale barriers to switching, claiming, or making a complaint.

Critically, TCF requires demonstration. An AI collections model that produces good average outcomes across the portfolio does not satisfy TCF if the institution cannot show, to FSCA’s satisfaction, how it continuously monitors the model’s behaviour to confirm fair treatment is being delivered at the individual account level.

Prudential Authority Basel III: Model Validation and Ongoing Monitoring. South Africa implemented Basel III in alignment with the internationally agreed timeline, and the Prudential Authority’s prudential standards mandate that financial institutions assess and mitigate model risk, third-party dependencies, and systemic vulnerabilities introduced by automation and advanced analytics. The PA’s Credit Risk Roadmap (D12-2025) specifies that banks using internal models must obtain prior written approval before making model changes, and that model validation documentation must be presented to the PA.

For AI collections models, this creates a continuous obligation: the model in production must be demonstrably the same model that was validated, or any changes must have followed the PA’s documented approval process. Self-learning models that update between validation cycles require specific governance treatment to satisfy this requirement.
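
One way to make "demonstrably the same model" concrete is to fingerprint the serialized model artifact when validation is signed off and compare that fingerprint at every decisioning run. The sketch below is a minimal illustration under that assumption; the function names and the sample byte strings are hypothetical and do not come from any PA standard.

```python
import hashlib


def fingerprint(artifact: bytes) -> str:
    """Return the SHA-256 hex digest of a serialized model artifact."""
    return hashlib.sha256(artifact).hexdigest()


def matches_validated(artifact: bytes, validated_digest: str) -> bool:
    """True only if the production artifact is byte-identical to the validated one."""
    return fingerprint(artifact) == validated_digest


# Hypothetical usage: the digest is recorded at validation sign-off.
validated = fingerprint(b"model-weights-v1")
print(matches_validated(b"model-weights-v1", validated))  # True
print(matches_validated(b"model-weights-v2", validated))  # False
```

A self-learning model would fail this check after every update, which is the point: each mismatch becomes a documented event that has to be tied back to an approved change.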

COFI Bill: Activity-Based Licensing and Enhanced Conduct Oversight. Once enacted, the COFI Bill will apply to virtually all financial services providers in South Africa, including banks, insurers, and credit providers. A key requirement is that all institutions engaging directly with customers must obtain a Conduct Licence from the FSCA, even if they already hold a prudential licence from the SARB. The COFI Bill’s transparency requirements, including AI-specific transparency obligations being developed in alignment with the COFI framework, demand that institutions embed a documented responsible AI framework into their AI-driven customer operations, covering how AI decisions are made, explained, and audited throughout the customer lifecycle.

The Baker McKenzie analysis of the November 2025 SARB/FSCA report identifies the forward direction precisely: institutions will have to put clear governance frameworks in place to protect data, ensure fairness, and be transparent in how AI is being used. The FSCA and PA plan to further engage stakeholders and align financial sector efforts with national AI strategies.

Why Collections AI Creates Specific Monitoring Challenges in South Africa

South Africa’s credit market characteristics create monitoring challenges that are distinct from those faced by US or European collections AI deployments. Four factors define the South African-specific monitoring environment.

The South African credit cycle and NCA debt review dynamics. South Africa has one of the world’s highest household debt-to-income ratios. The NCA’s debt review mechanism allows over-indebted consumers to restructure their obligations under debt counsellor supervision, which removes them from standard collections contact while they are under review. An AI collections model that cannot detect debt review status in real time, or that continues to contact consumers placed under debt review, is creating NCA compliance violations with each contact attempt. Monitoring the model’s NCA compliance guardrails requires a live data feed from the National Credit Regulator’s debt review registry, not a periodic batch check.

The August 2025 proposed amendments to the National Credit Regulations further tighten data accuracy obligations: credit providers must submit consumer credit information in accordance with NCR guidelines, with expanded bureau data requirements and tighter affordability assessment standards. For AI collections models that use bureau data as an input feature, these amendments require monitoring of data input quality, not just model output performance.

POPIA Section 71 and automated decision-making obligations. Section 71(1) of POPIA restricts automated decision-making where decisions have legal consequences or affect the data subject to a substantial degree. A collections contact strategy decision falls within this scope: being placed in an intensive collections contact sequence has material consequences for the consumer. Section 71(3)(b) requires that the responsible party provide the data subject with sufficient information about the underlying logic of the automated decision-making to enable them to make representations.

In practical terms, this means every AI-generated collections prioritisation decision must be explainable to the consumer on request, in clear and understandable language. Monitoring the model’s explainability capability is therefore not an internal governance nicety. It is a legal obligation that activates each time an automated collections decision is made.
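
As an illustration of what "explainable on request" could look like operationally, the sketch below turns per-decision feature contributions into plain-language reasons. It assumes contributions have already been computed by whatever attribution method the institution uses; the feature names and the wording in `REASON_TEXT` are hypothetical, and real consumer-facing text would need legal review.

```python
# Hypothetical mapping from model features to consumer-facing wording.
REASON_TEXT = {
    "days_past_due": "the number of days your account is overdue",
    "missed_payments": "the number of payments missed in the last six months",
    "balance": "the outstanding balance on your account",
}


def top_reasons(contributions: dict[str, float], k: int = 2) -> list[str]:
    """Return plain-language text for the k features with the largest absolute contribution."""
    ranked = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
    # Fall back to the raw feature name if no wording has been approved for it.
    return [REASON_TEXT.get(name, name) for name in ranked[:k]]


decision = {"days_past_due": 0.4, "balance": -0.1, "missed_payments": 0.3}
for reason in top_reasons(decision):
    print(reason)
```

Monitoring explainability then reduces to a testable question: can this function produce approved wording for every decision the model made today?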

Disparate impact across race and gender in the South African equity context. South Africa’s constitutional framework and transformation obligations create a monitoring requirement with no direct equivalent in US or European collections governance: the obligation to monitor AI model outputs for disparate impact across race and gender. An AI collections model that systematically produces different contact strategies, different treatment intensities, or different resolution outcomes for consumers from different racial groups is not simply generating a performance anomaly. It is generating an equity compliance exposure that intersects with the Promotion of Equality and Prevention of Unfair Discrimination Act.

The FSCA’s Regulatory Strategy 2025-2028 identifies fairness and the elimination of discriminatory outcomes as explicit supervisory priorities. Governance monitoring for AI collections models in South Africa must include ongoing disparate impact analysis as a formal, documented monitoring parameter.

Multi-agent workflow complexity. Modern collections AI platforms involve multiple interacting agents. Each agent’s individual behaviour may be compliant, but the interaction effects between agents can produce outcomes that none individually would generate. Monitoring a multi-agent collections workflow requires system-level visibility that tracks not just individual model performance but the end-to-end customer journey that the workflow produces. The FSCA and PA’s joint report specifically identifies the complexity of multi-agent AI systems as a governance challenge requiring dedicated monitoring attention.

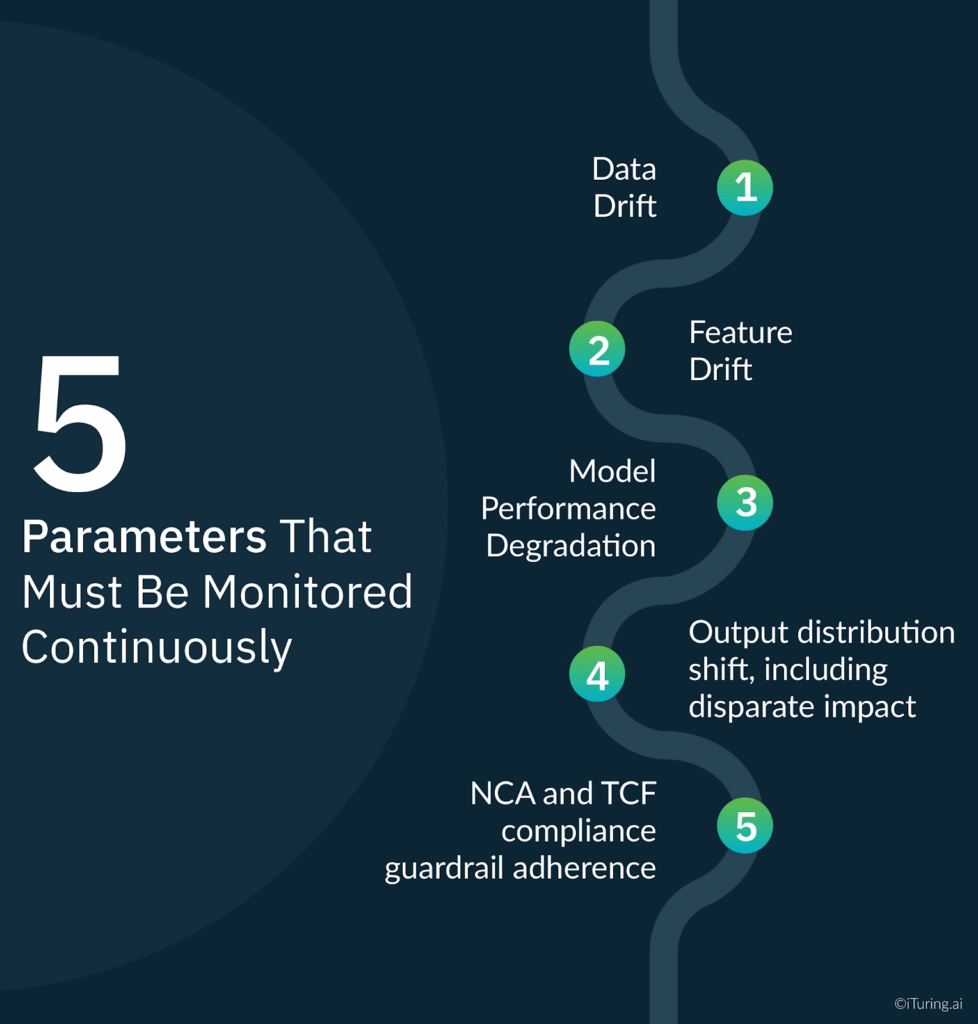

The Five Parameters That Must Be Monitored Continuously

A complete model monitoring program for an AI collections governance framework in a South African institution covers five parameters continuously, with documented thresholds and escalation procedures for each. These parameters form the operational core of any credible AI governance solution for collections: they translate the FSCA TCF, PA Basel III, and POPIA obligations into measurable, auditable, examination-ready monitoring activity.

Data drift. The statistical properties of the model’s input features change as the credit market evolves. A model trained on pre-2024 consumer behaviour data will encounter different relationships between its input features and payment outcomes as the credit cycle shifts. Data drift monitoring detects when input feature distributions move outside the range observed during model training, triggering a review of whether the model’s predictions remain reliable. For South African collections models, data drift monitoring must include bureau data quality as a specific tracked parameter given the NCA’s tightened data accuracy obligations.
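
A common way to quantify this kind of input drift is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time with its distribution in production. The sketch below is a minimal version that assumes the bin proportions have already been computed; the article does not say which drift statistic any particular platform uses, and the cut-offs often quoted for PSI (around 0.1 and 0.25) are industry rules of thumb rather than regulatory figures.

```python
import math


def psi(expected: list[float], actual: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions.

    `expected` and `actual` are per-bin proportions that each sum to 1.
    """
    if len(expected) != len(actual):
        raise ValueError("bin counts differ")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) on empty bins
        total += (a - e) * math.log(a / e)
    return total


# Identical distributions give zero drift.
print(psi([0.5, 0.5], [0.5, 0.5]))  # 0.0
# A large shift in bin proportions gives a large PSI.
print(psi([0.5, 0.5], [0.9, 0.1]) > 0.25)  # True
```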

Feature drift. Related to data drift but distinct from it, and often called concept drift: the predictive relationship between individual features and payment behaviour changes, even if the overall input distribution appears stable. A feature that was strongly predictive of cure behaviour during one economic period may lose predictive power as consumer financial conditions change. Feature drift monitoring uses SHAP value tracking over time to detect when feature importances shift materially.
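
The SHAP tracking described above can be sketched as a comparison of importance shares between a baseline window and the current window. The example assumes the mean absolute SHAP value per feature has already been computed elsewhere; the feature names, the numbers, and the 0.10 tolerance are illustrative assumptions.

```python
def importance_shares(mean_abs: dict[str, float]) -> dict[str, float]:
    """Normalise mean absolute attributions so they sum to 1."""
    total = sum(mean_abs.values())
    if total == 0:
        raise ValueError("attributions sum to zero")
    return {name: value / total for name, value in mean_abs.items()}


def shifted_features(
    baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.10
) -> list[str]:
    """Names of baseline features whose importance share moved by more than `tolerance`."""
    base, cur = importance_shares(baseline), importance_shares(current)
    return sorted(
        name for name in base if abs(cur.get(name, 0.0) - base[name]) > tolerance
    )


baseline = {"days_past_due": 6.0, "bureau_score": 3.0, "income": 1.0}
current = {"days_past_due": 3.0, "bureau_score": 3.0, "income": 4.0}
print(shifted_features(baseline, current))  # ['days_past_due', 'income']
```

Comparing shares rather than raw values keeps the check stable when the overall scale of attributions changes between windows.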

Model performance degradation. Tracking the model’s prediction accuracy against actual payment outcomes on a rolling basis. For South African collections models, performance must be tracked disaggregated by NCA consumer category, by DPD bucket, and by geographic region. Aggregate performance metrics can mask deterioration in specific segments that, if left undetected, represent both credit risk exposure and TCF compliance exposure.
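
The masking effect is easy to show with a small example. The sketch below computes accuracy per segment from `(segment, predicted, actual)` records; the DPD bucket labels and the data are made up for illustration.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """records: iterable of (segment, predicted, actual) tuples."""
    hits, counts = defaultdict(int), defaultdict(int)
    for segment, predicted, actual in records:
        counts[segment] += 1
        hits[segment] += int(predicted == actual)
    return {segment: hits[segment] / counts[segment] for segment in counts}


records = [
    ("0-30", 1, 1), ("0-30", 0, 0), ("0-30", 1, 1), ("0-30", 0, 0),
    ("90+", 1, 0), ("90+", 0, 1), ("90+", 1, 1), ("90+", 0, 1),
]
overall = sum(p == a for _, p, a in records) / len(records)
print(overall)                       # 0.625
print(accuracy_by_segment(records))  # {'0-30': 1.0, '90+': 0.25}
```

An aggregate of 62.5% hides the fact that the model is right only a quarter of the time in the 90+ bucket.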

Output distribution shift, including disparate impact. Monitoring the distribution of collections contact strategies, treatment intensities, and resolution outcomes across the portfolio. For South African institutions, this monitoring must specifically track whether outcomes differ systematically by race, gender, or geographic proxy. Output distribution shift monitoring is the mechanism through which TCF Outcome 4 compliance is continuously verified: if the model is producing systematically different treatment recommendations for consumers with equivalent financial profiles but different demographic characteristics, the monitoring system must detect and escalate this before it becomes an FSCA examination finding.
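
A simple statistic for this check is the ratio between the lowest and highest group rates of a given treatment. The sketch below assumes the decisions passed in have already been restricted to consumers with comparable financial profiles, as the paragraph above requires; the group labels and any tolerance applied to the ratio are illustrative and are not figures taken from the FSCA or from PEPUDA.

```python
from collections import defaultdict


def intensive_rate_by_group(decisions):
    """decisions: iterable of (group, is_intensive) pairs for a comparable cohort."""
    intensive, counts = defaultdict(int), defaultdict(int)
    for group, is_intensive in decisions:
        counts[group] += 1
        intensive[group] += int(is_intensive)
    return {group: intensive[group] / counts[group] for group in counts}


def impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means identical rates."""
    highest = max(rates.values())
    return 1.0 if highest == 0 else min(rates.values()) / highest


decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]
rates = intensive_rate_by_group(decisions)
print(rates)                # {'group_a': 0.5, 'group_b': 0.25}
print(impact_ratio(rates))  # 0.5
```

A ratio well below 1.0 for a cohort with equivalent financial profiles is the kind of result that should trigger escalation rather than wait for the next validation cycle.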

NCA and TCF compliance guardrail adherence. Monitoring whether the model’s contact recommendations comply with NCA contact restrictions, debt review exclusions, and TCF fair treatment standards on every decisioning cycle. This parameter monitors the model’s behaviour at the guardrail level rather than at the performance level. A model can be performing accurately in a predictive sense while simultaneously generating contact recommendations that breach NCA restrictions for consumers in debt review. Guardrail adherence monitoring catches this class of failure that performance monitoring alone cannot detect.
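
At its simplest, a guardrail check inspects each decisioning cycle's recommendations for any contact aimed at an account under debt review. The sketch below assumes the debt review flag comes from a current status lookup; the `Recommendation` type and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    account_id: str
    under_debt_review: bool  # assumed to come from a current status lookup
    contact: bool            # True if the model recommends contacting the consumer


def guardrail_breaches(recommendations: list[Recommendation]) -> list[str]:
    """Account IDs where the model recommends contact despite debt review status."""
    return [r.account_id for r in recommendations if r.contact and r.under_debt_review]


cycle = [
    Recommendation("A1", under_debt_review=False, contact=True),
    Recommendation("A2", under_debt_review=True, contact=True),
    Recommendation("A3", under_debt_review=True, contact=False),
]
print(guardrail_breaches(cycle))  # ['A2']
```

The check is independent of predictive accuracy: it passes or fails on what the model recommends, not on whether its forecasts were right.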

What an FSCA Examiner Will Ask For

The FSCA’s Regulatory Strategy 2025-2028 identifies AI governance in financial institutions as an emerging examination priority. Based on the FSCA’s published TCF examination methodology and the Prudential Authority’s model risk examination standards, an AI collections governance examination can be expected to focus on five documentation areas.

Monitoring frequency and methodology documentation. Evidence that monitoring is continuous, not periodic. For a collections model running daily decisioning, daily monitoring records must exist. An annual validation report without supporting ongoing monitoring logs does not satisfy the FSCA’s continuous demonstration requirement under TCF. Institutions that rely on manual processes rather than purpose-built AI governance software to generate these records will face significant difficulty producing the volume of documentation an examination requires on short notice.

Escalation procedures with documented activation history. Pre-defined thresholds for each monitored parameter, documented escalation paths when thresholds are breached, and records of every escalation event including the action taken and the outcome. Escalation procedures written after a breach occurs do not satisfy the governance requirement.
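
Pre-defined thresholds and an activation history can be represented very simply: thresholds are declared as data before deployment, and every breach produces a timestamped record. The sketch below is one possible shape; the parameter names, limits, and escalation targets are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Threshold:
    parameter: str    # name of the monitored metric
    limit: float      # breach when the reading exceeds this value
    escalate_to: str  # hypothetical role that receives the escalation


def evaluate(thresholds, readings, now=None):
    """Return one escalation record per threshold whose reading exceeds its limit."""
    now = now or datetime.now(timezone.utc)
    events = []
    for t in thresholds:
        value = readings.get(t.parameter)
        if value is not None and value > t.limit:
            events.append({
                "parameter": t.parameter,
                "value": value,
                "limit": t.limit,
                "escalate_to": t.escalate_to,
                "detected_at": now.isoformat(),
            })
    return events


thresholds = [
    Threshold("psi_bureau_score", 0.25, "model_risk"),
    Threshold("guardrail_breaches", 0, "compliance"),
]
for event in evaluate(thresholds, {"psi_bureau_score": 0.31, "guardrail_breaches": 0}):
    print(event["parameter"], "->", event["escalate_to"])
```

Appending these records to an immutable log, together with the action taken and its outcome, produces the activation history an examiner would ask for.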

Retraining governance records. For every model retraining event: who authorised the retraining, what governance process was followed, what validation was conducted before the retrained model returned to production, and whether the PA’s model change approval requirements under D12-2025 were satisfied.

TCF outcome measurement evidence. Documentation demonstrating how TCF outcomes, specifically Outcomes 1, 4, and 6, are measured within the AI collections workflow, at what frequency, by whom, and with what results. This is the element most commonly absent from AI collections governance documentation in South Africa: institutions can describe their TCF policy but cannot produce the measurement evidence that FSCA examinations require.

Board risk committee reporting archive. Records of AI collections governance reporting provided to the board’s risk committee, including the content, frequency, and the committee’s recorded responses to governance findings. The FSCA’s Integrated Report 2024-25 identifies board-level oversight of AI risk as a supervisory expectation, and examination teams look for evidence that the board has genuine visibility of AI model governance status.

Champion-Challenger as an FSCA Governance Tool

Champion-challenger testing serves a specific governance function in the South African regulatory context that goes beyond its role in US model risk management. It is also one of the clearest practical manifestations of a responsible AI framework in production: the institution continuously proves its deployed model is both the most accurate and the most equitable option available, rather than simply asserting compliance.

Under TCF, regulated institutions must demonstrate that their AI collections model remains the best available approach for delivering fair customer outcomes. Running a challenger model continuously alongside the production champion provides empirical evidence that the deployed model remains both accurate and fair, relative to the available alternative. This evidence is directly responsive to FSCA’s requirement that fair treatment is demonstrably delivered, rather than simply asserted.

Under the PA’s Basel III internal model standards, champion-challenger provides the independent performance comparison that satisfies the model monitoring independence requirement: the challenger is developed and monitored by a team independent of the champion model’s owners, and the comparison data provides ongoing empirical validation of the production model’s adequacy.

For disparate impact monitoring specifically, champion-challenger enables a South African institution to demonstrate that the production model does not systematically produce worse outcomes for protected groups relative to the challenger. If the challenger consistently produces more equitable outcomes across demographic segments, this is a governance finding that must be escalated and acted on. The champion-challenger framework makes this comparison structured, documented, and examination-ready.
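
The comparison itself can be reduced to a small, documented rule. The sketch below assumes each model's accuracy and a lowest-to-highest group outcome ratio have already been measured on the same accounts; the metric names and the 0.02 margin are hypothetical choices, not regulatory values.

```python
def compare(champion: dict, challenger: dict, margin: float = 0.02) -> list[str]:
    """Return governance findings where the challenger beats the champion by more than `margin`."""
    findings = []
    if challenger["accuracy"] - champion["accuracy"] > margin:
        findings.append("challenger more accurate")
    if challenger["impact_ratio"] - champion["impact_ratio"] > margin:
        findings.append("challenger more equitable")
    return findings


champion = {"accuracy": 0.80, "impact_ratio": 0.70}
challenger = {"accuracy": 0.81, "impact_ratio": 0.85}
print(compare(champion, challenger))  # ['challenger more equitable']
```

An empty list is itself evidence worth logging: it records that, on that date, the deployed model was not materially outperformed on either dimension.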

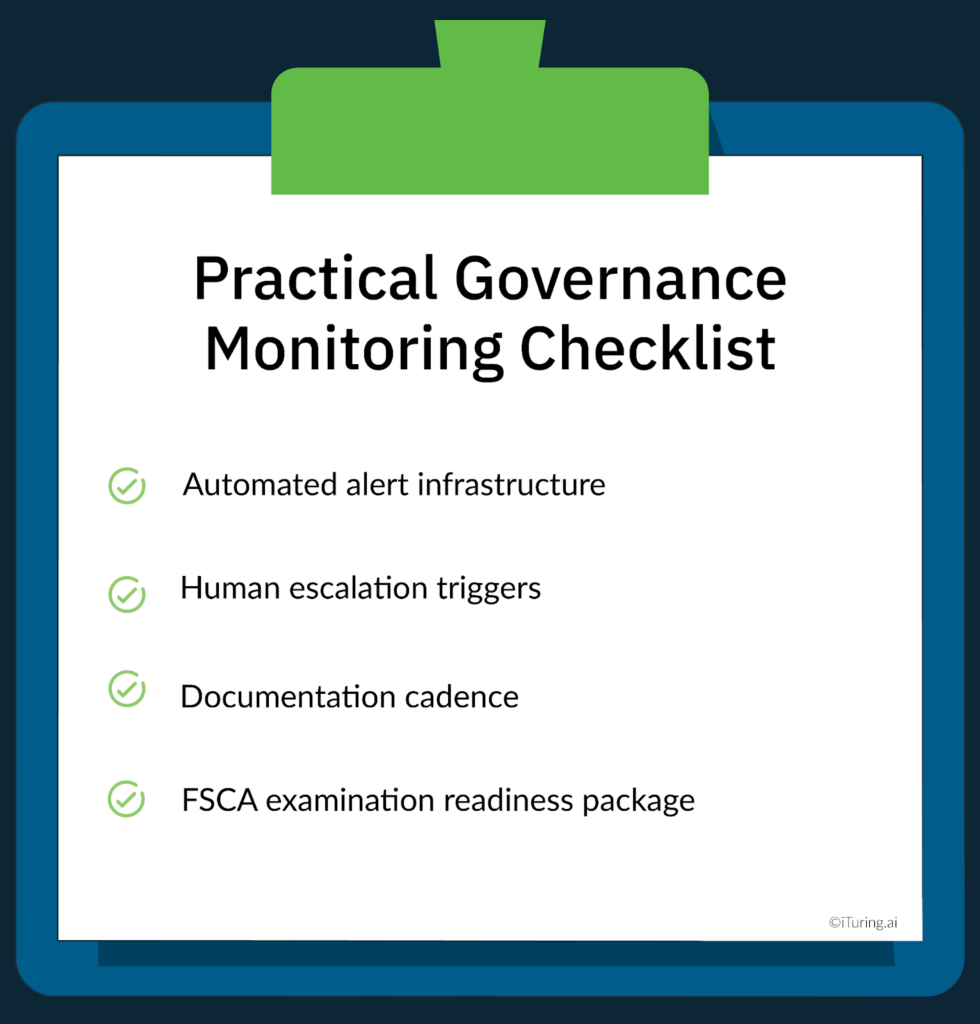

Practical Governance Monitoring Checklist

A complete, examination-ready AI collections governance monitoring program for a South African institution operates across four operational dimensions.

Automated alert infrastructure. Purpose-built AI governance software with real-time threshold breach alerts across all five monitoring parameters, routed to the model owner and the model risk function simultaneously. Alert thresholds must be set before deployment and must be documented in the model governance record. Manual monitoring processes that depend on analyst availability to detect breaches do not meet the continuous monitoring standard. Institutions attempting to manage this with spreadsheet-based tracking or periodic analyst reviews are not producing the continuous monitoring record that FSCA examinations will require.

Human escalation triggers. Defined criteria for when an alert escalates from automated notification to a governance committee decision: significant performance degradation, detected disparate impact, NCA compliance breach, or retraining event. The escalation process must include a documented timeline from breach detection to governance committee review to resolution action.

Documentation cadence. Daily monitoring logs maintained in automated format. Monthly performance summary reports produced for the model owner. Quarterly governance reviews conducted by the model risk function with formal sign-off. Annual independent validation encompassing all five monitoring parameters. Board risk committee reporting on a quarterly or semi-annual basis as per the institution’s governance framework.

FSCA examination readiness package. A continuously maintained documentation package that can be produced for an FSCA or PA examination on short notice. The package should include the model inventory entry, the most recent validation report, twelve months of monitoring logs, all escalation records, retraining governance decisions, TCF outcome measurement reports, disparate impact monitoring results, and board reporting archives.

How iTuring Addresses This

iTuring’s collections AI platform includes a governance monitoring module built specifically for the multi-regulatory environment South African banks and insurers operate in. The platform functions as an end-to-end AI governance solution: from pre-deployment bias testing and responsible AI framework documentation through to continuous post-deployment model monitoring and one-click examination readiness reporting.

Real-time monitoring across 60 parameters covers all five monitoring dimensions required by the FSCA/PA framework: data drift, feature drift, model performance degradation, output distribution shift, and compliance guardrail adherence. Disparate impact monitoring across demographic segments is built into the output distribution monitoring as a standard parameter, not an optional add-on, given the specific South African equity obligations that collections AI deployments must satisfy.

Automated drift detection provides early warnings 2 to 4 weeks before threshold breaches, giving model risk teams sufficient time to initiate governance processes before performance deteriorates to examination-relevant levels. Champion-challenger testing is embedded in the deployment architecture by default, producing continuous empirical comparison data that satisfies both TCF’s demonstration requirement and the PA’s independent review standard.

One-click examination readiness packages generate complete documentation covering all FSCA/PA requirements, formatted for regulatory review and including TCF outcome measurement evidence, disparate impact monitoring results, and board reporting archives.

Regulatory Disclaimer

This article is for informational purposes only and does not constitute legal or compliance advice. FSCA TCF requirements, Prudential Authority Basel III standards, POPIA obligations, NCA requirements, and COFI Bill provisions are subject to change and finalisation. The COFI Bill has not been enacted as at the publication date of this article. Information presented reflects publicly available regulatory guidance and the November 2025 SARB/FSCA joint report as of the publication date. Consult qualified South African legal and compliance professionals for guidance specific to your institution’s circumstances.

Sources: SARB/FSCA: AI in the South African Financial Sector November 2025 | Baker McKenzie: South Africa AI Adoption SARB and FSCA | Moonstone: FSCA and PA Comprehensive Study AI Use | FSCA: Treating Customers Fairly | FSCA Regulatory Strategy 2025-2028 | FSCA Integrated Report 2024-25 | Prudential Authority D12-2025 Credit Risk Roadmap | Novia One: COFI Bill Implementation | Moonstone: FSCA Aligning Work with COFI | Dvara Research: TCF Policy South Africa | Swart Law: Transparency and Automated Decision-Making POPIA | QUT Law: Automated Decision-Making POPIA Section 71 | UP Journals: Analysis of POPIA Section 71 | FairBridges: AI Governance Framework South Africa | Masthead: NCA Regulation Amendments August 2025 | Banking Association SA: National Credit Act