TL;DR

- The November 2025 SARB/FSCA joint report found that model development, validation, and ongoing monitoring are among the most common governance frameworks in SA banking, but that governance maturity varies widely across institutions

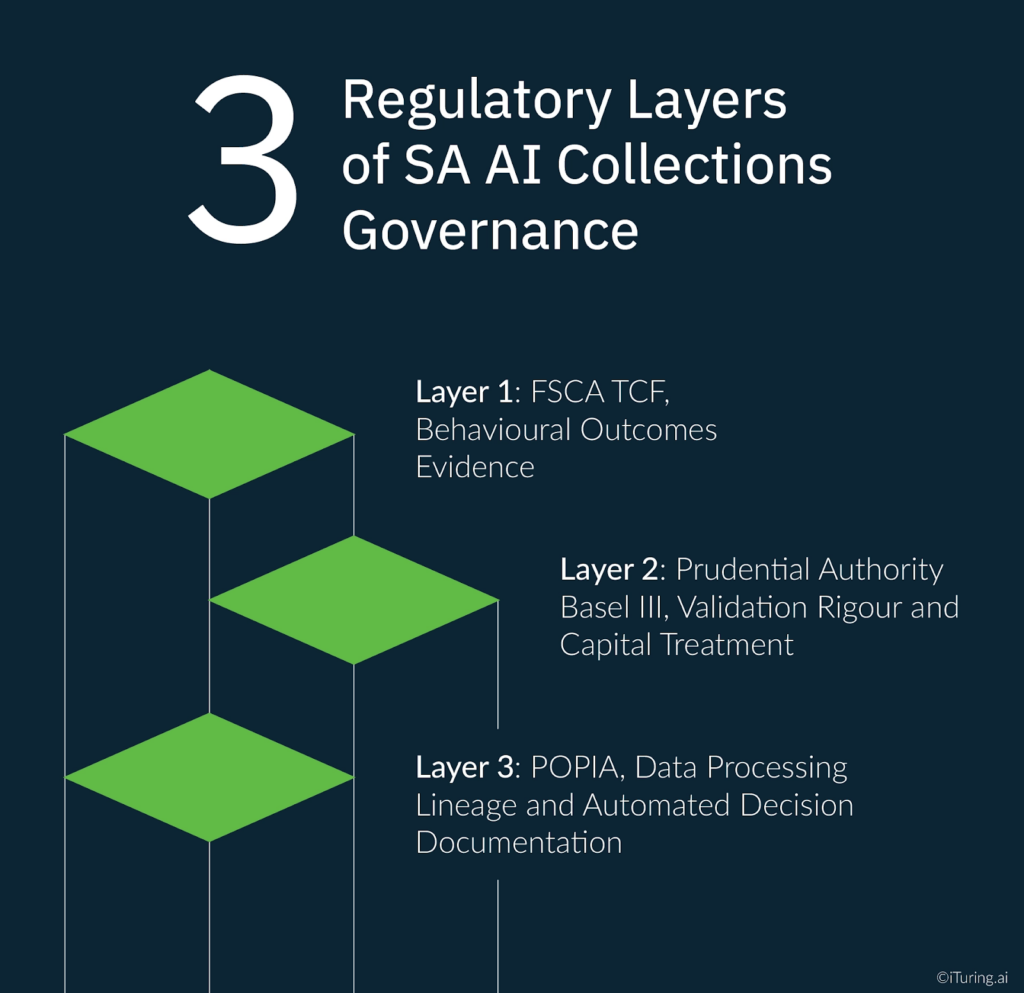

- SA collections AI governance must satisfy three separate documentation streams: FSCA TCF, Prudential Authority Basel III, and POPIA

- Vendor-hosted AI models may not appear in internal model inventories, a specific finding that FSCA examiners now look for

- Disparate impact testing across race, gender, and disability is a SA-specific validation requirement with no direct equivalent in the major global model risk standards

- Board-level oversight of AI model governance is an explicit PA and FSCA supervisory expectation, not merely a governance best practice

The KPMG South Africa Ten Key Regulatory Challenges for 2025 places AI model validation and continuous monitoring at the centre of the financial services governance agenda. It identifies three requirements as the most critical for South African banks to address in their AI systems: rigorous testing and validation before deployment, continuous monitoring after deployment, and board-level oversight throughout the model lifecycle.

The challenge is that most South African banks built their model governance frameworks for traditional statistical scorecards. A logistic regression scorecard has a fixed set of features, a stable coefficient structure, and a validation methodology that has been standardised over decades. The governance infrastructure surrounding those models (the inventory entries, the validation templates, the approval workflows, the change management records) was designed for something that stays still between validation cycles.

AI collections models do not stay still. A self-learning propensity model updates its parameters as it processes new account outcomes. A multi-agent orchestration layer routes accounts through changing combinations of AI components as portfolio conditions evolve. A champion-challenger deployment continuously generates new model versions. Applying a scorecard-era governance framework to this environment produces the most common AI governance finding that PA and FSCA examiners are now encountering: governance documentation that describes a model as it was at validation but does not reflect how the model is actually operating in production.

This article covers the three regulatory layers of AI collections model governance in South Africa, the specific inventory and approval workflow failures that examination teams look for, the validation requirements that SA collections AI must satisfy beyond a standard Basel III program, and what a Prudential Authority examiner will check when they review a bank’s AI collections governance documentation.

The Three Regulatory Layers of SA AI Collections Governance

The fundamental governance challenge for South African banks and insurers running AI collections models is that three distinct regulatory frameworks impose governance obligations simultaneously, each with its own documentation standard and each enforced by a separate supervisory body. A properly structured enterprise AI governance program for South African collections AI must maintain all three documentation streams concurrently, formatted for three distinct supervisory audiences, covering a single deployed model.

Layer 1: FSCA TCF, Behavioural Outcomes Evidence. The FSCA’s market conduct mandate requires that institutions can demonstrate, to the FSCA’s satisfaction, that TCF outcomes are being delivered to customers throughout the product and service lifecycle. For collections AI, this creates a specific documentation obligation: the governance record must show not just that the model was validated before deployment but that its outputs are continuously monitored for fair treatment evidence. The FSCA AI report signals explicitly that AI adoption must align with TCF principles and the broader market conduct framework, and that FSPs must be able to demonstrate compliance where automated decision-making is involved.

TCF governance documentation is behavioural rather than technical. Where a PA validation report covers discriminatory power and calibration, a TCF governance record covers customer outcome evidence: whether the model’s contact strategy decisions treat customers fairly, whether customers are provided with sufficient information about automated decisions, and whether complaint patterns reflect any systematic fairness failures.

Layer 2: Prudential Authority Basel III, Validation Rigour and Capital Treatment. The PA’s prudential standards mandate that financial institutions assess and mitigate model risk, third-party dependencies, and systemic vulnerabilities introduced by automation and advanced analytics. For IRB approach banks, the D12-2025 Credit Risk Roadmap adds specific change approval requirements: prior written approval before model changes, and PA communication with validation documentation for Tier 2 recalibrations. The PA’s ongoing monitoring obligation is the technical layer of AI governance monitoring, and the FSCA’s conduct documentation requirement complements it at the behavioural level. Both regulators require continuous evidence of model performance; they differ in what type of evidence each considers sufficient.

PA governance documentation is technical and quantitative. Validation reports must address discriminatory power, calibration accuracy, stability, and conceptual soundness. Ongoing monitoring must be evidenced through documented performance tracking. Change management must be evidenced through approval records and version control logs. These requirements exist independently of TCF requirements, and satisfying one does not satisfy the other.

Layer 3: POPIA, Data Processing Lineage and Automated Decision Documentation. The 2025 POPIA regulation amendments, published under Government Notice No. 6126, expanded data subject rights and tightened responsibilities for information officers in relation to automated decision-making. For AI collections models, POPIA creates governance obligations at the data input level: every data source feeding the model must have a legal basis for processing documented in the institution’s records of processing activities, and every automated decision affecting a data subject must be capable of generating a Section 71(3)(b) explanation.

POPIA governance documentation is lineage-based rather than performance-based. The record must trace each data input from its source through processing to model output, confirming at each step that the legal basis for processing was valid and that the data subject’s rights under POPIA were respected.
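The lineage check described above can be sketched as a simple completeness test over the model's inputs. This is a minimal illustration, not a POPIA compliance tool: the `DataInput` structure, the field names, the example data sources, and the legal basis labels are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DataInput:
    name: str                   # model feature or feature group (hypothetical)
    source_system: str          # where the data originates (hypothetical)
    legal_basis: Optional[str]  # documented basis for processing; None if missing


def undocumented_inputs(inputs: List[DataInput]) -> List[str]:
    """Return the names of inputs that have no documented legal basis."""
    return [i.name for i in inputs
            if not (i.legal_basis and i.legal_basis.strip())]


# Illustrative inputs only; the basis labels are placeholders.
model_inputs = [
    DataInput("payment_history", "core_banking", "contract"),
    DataInput("bureau_score", "credit_bureau", "legal obligation"),
    DataInput("whatsapp_engagement", "messaging_vendor", None),
]

gaps = undocumented_inputs(model_inputs)
print(gaps)
```

Any name returned by `undocumented_inputs` marks a data source whose records of processing activities entry would need attention before the model output can be traced end to end.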

The governance challenge is that these three documentation streams use different templates, serve different supervisory audiences, and carry different examination consequences. A single integrated AI collections governance framework must maintain all three simultaneously, in formats that are accessible to PA examiners, FSCA conduct supervisors, and the Information Regulator respectively.

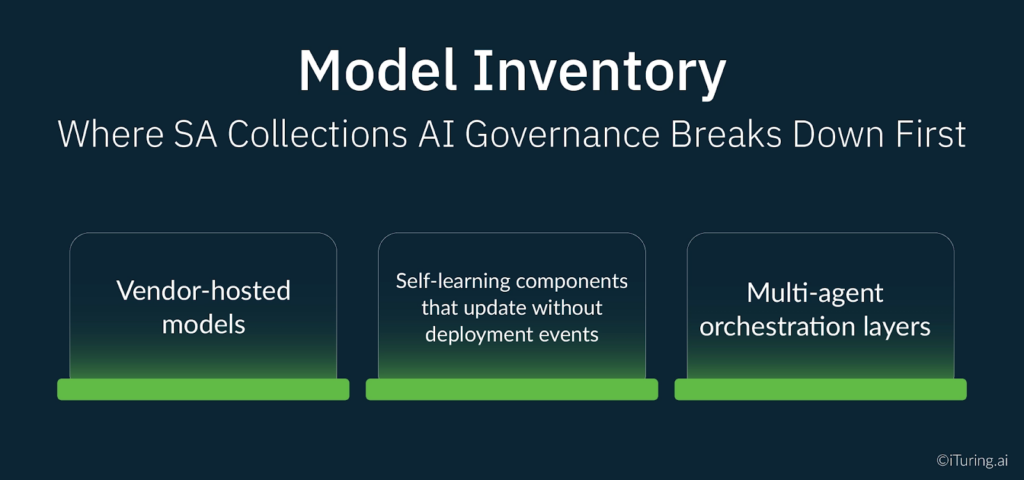

Model Inventory: Where SA Collections AI Governance Breaks Down First

The November 2025 SARB/FSCA joint report found that model development, validation, and ongoing monitoring were among the most common governance frameworks deployed by South African banking institutions. The report also found that governance frameworks vary widely in maturity, with many institutions relying on existing risk management structures rather than dedicated AI governance arrangements.

The most common point of breakdown is the model inventory. A model that is not in the inventory is a model that has no governance. Three characteristics of AI collections deployments cause inventory failures that do not arise with traditional scorecards.

Vendor-hosted models. When an AI collections platform is hosted by a third-party vendor, the models running on that platform may not automatically appear in the bank’s internal model inventory. The bank’s model risk function is tracking the models it owns and operates directly. The vendor’s models are producing credit and collections decisions affecting the bank’s customers, but they sit outside the internal governance perimeter. The PA’s third-party risk standards require that institutions assess and mitigate risks from third-party dependencies in their AI systems. Satisfying this requirement means the vendor’s models must appear in the bank’s internal inventory with documented oversight arrangements, regardless of where they are hosted.

Self-learning components that update without deployment events. Traditional model governance frameworks use deployment events as governance triggers: a new model is deployed, which triggers an inventory entry, a validation requirement, and a change management record. A self-learning collections AI model updates its parameters continuously as it processes new outcome data, without generating a formal deployment event.

Without governance criteria that define when a parameter update constitutes a model change requiring an inventory update, the model’s current state will diverge from its inventory entry over time. The PA examiner who compares the model documentation against the production model’s current parameters will find a gap that constitutes a governance finding even if the model itself is performing well.

Multi-agent orchestration layers. A modern collections AI system may involve a propensity scoring model, a contact strategy optimisation model, a channel selection model, a natural language generation model for WhatsApp message personalisation, and an orchestration layer that coordinates them. Each component is a model in the PA’s sense of the term. The orchestration layer, which makes the decisions about how the component models interact, is also a model. A governance framework that inventories the component models but not the orchestration layer is incomplete.
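The three inventory failures above reduce to two checks: is every production component in the inventory, and does the inventory version match what is running? The sketch below assumes a hypothetical `Component` record and an inventory represented as a plain mapping from model ID to recorded version; a real inventory system will have its own schema.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Component:
    model_id: str
    role: str       # e.g. "propensity", "orchestration"
    hosted_by: str  # "internal" or a vendor name
    version: str    # version currently running in production


def inventory_findings(production: List[Component],
                       inventory: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Return (missing_ids, stale_ids) for production components.

    missing: running in production but absent from the inventory.
    stale:   inventoried, but the recorded version differs from production.
    """
    missing = [c.model_id for c in production if c.model_id not in inventory]
    stale = [c.model_id for c in production
             if c.model_id in inventory and inventory[c.model_id] != c.version]
    return missing, stale


production = [
    Component("propensity", "propensity", "internal", "3.2"),
    Component("channel_select", "channel selection", "vendor_x", "1.4"),
    Component("orchestrator", "orchestration", "internal", "2.0"),
]
inventory = {"propensity": "3.0"}  # only the in-house scorecard was recorded

missing, stale = inventory_findings(production, inventory)
print(missing, stale)
```

In this illustrative case the vendor-hosted model and the orchestration layer are missing, and the self-learning propensity model has drifted past its recorded version.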

Approval Workflows Under South African Governance Requirements

Model approval workflows for South African AI collections governance must satisfy the PA’s change management requirements under D12-2025 while also capturing the TCF-relevant change events that the PA’s framework does not cover.

Defining material model changes for collections AI. The PA’s D12-2025 framework distinguishes between Tier 1 changes requiring prior written approval and Tier 2 recalibrations requiring communication with validation documentation. Applying this framework to a self-learning collections model requires the institution to pre-define the threshold at which a parameter update constitutes a Tier 1 change rather than a Tier 2 recalibration. This definition must be documented in the model governance framework before deployment, not determined case by case when an update occurs.

For collections AI, the working criteria that most SA model risk teams are now adopting treat the following as Tier 1 changes requiring prior PA approval: changes to the model’s fundamental methodology, the addition or removal of feature groups, changes to the model boundary that alter which accounts are in scope, and any change that materially affects the model’s treatment of accounts in any demographic segment. Parameter recalibrations within established performance bounds, using the same methodology and feature set, are treated as Tier 2 events with validation documentation and PA notification.
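These working criteria can be written down as a pre-defined rule rather than a case-by-case judgment. The sketch below is an assumption-laden illustration: the `ModelChange` fields, the `SEGMENT_SHIFT_LIMIT` materiality value, and the `"escalate"` outcome for recalibrations outside performance bounds are hypothetical and do not come from D12-2025.

```python
from dataclasses import dataclass

# Hypothetical materiality limit for a shift in treatment of any
# demographic segment; an institution would set and document its own.
SEGMENT_SHIFT_LIMIT = 0.02


@dataclass(frozen=True)
class ModelChange:
    methodology_changed: bool
    feature_groups_changed: bool
    scope_boundary_changed: bool
    max_segment_treatment_shift: float  # largest shift across segments
    within_performance_bounds: bool


def classify(change: ModelChange) -> str:
    """Classify a change as 'tier_1', 'tier_2', or 'escalate'."""
    if (change.methodology_changed
            or change.feature_groups_changed
            or change.scope_boundary_changed
            or change.max_segment_treatment_shift > SEGMENT_SHIFT_LIMIT):
        return "tier_1"   # prior written approval needed
    if change.within_performance_bounds:
        return "tier_2"   # notification with validation documentation
    # Assumption: anything else goes to manual review by model risk.
    return "escalate"


routine = ModelChange(False, False, False, 0.005, True)
new_features = ModelChange(False, True, False, 0.0, True)
print(classify(routine), classify(new_features))
```

Encoding the thresholds before deployment means each self-learning update can be classified the moment it occurs, with the rule itself forming part of the governance record.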

Maker-checker requirements for collections AI changes. Maker-checker controls for model changes require that the person or team authorising a model update is independent of the person or team who developed and proposed the update. For AI collections models, the maker-checker principle extends to retraining governance: the model risk function, independent of the collections platform team, must review and approve each retraining event before the retrained model returns to production. The retraining approval record must document the business reason for retraining, the validation evidence reviewed, and the approval sign-off by the independent reviewer.
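A minimal sketch of the maker-checker rule for retraining approval follows. The `RetrainingRequest` fields, team names, and person identifiers are hypothetical; the point is only that independence and record completeness are checked before any approval record is produced.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class RetrainingRequest:
    proposed_by: str
    proposer_team: str
    business_reason: str
    validation_evidence: Tuple[str, ...]  # references to reviewed evidence


def approve(request: RetrainingRequest, approver: str,
            approver_team: str) -> Dict[str, object]:
    """Return an approval record, or raise if the controls are not met."""
    if approver == request.proposed_by or approver_team == request.proposer_team:
        raise PermissionError("approver must be independent of the proposer")
    if not request.business_reason.strip() or not request.validation_evidence:
        raise ValueError("business reason and validation evidence are required")
    return {
        "approved_by": approver,
        "approver_team": approver_team,
        "business_reason": request.business_reason,
        "validation_evidence": list(request.validation_evidence),
    }


request = RetrainingRequest(
    proposed_by="platform_engineer",
    proposer_team="collections_platform",
    business_reason="Quarterly refresh after portfolio mix change",
    validation_evidence=("backtest_report", "segment_comparison"),
)

record = approve(request, "model_risk_reviewer", "model_risk")

try:
    approve(request, "platform_lead", "collections_platform")
    blocked = False
except PermissionError:
    blocked = True
print(record["approved_by"], blocked)
```

The second call is rejected because the approver sits on the same team as the proposer, which is the situation the maker-checker control exists to prevent.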

TCF change events that PA workflows do not capture. Beyond the PA’s technical change management framework, TCF governance requires that certain business and operational changes to the collections model are also documented and reviewed, even when they do not constitute a model change in the PA’s sense. Changes to the customer segments the model is deployed against, changes to the contact strategy policy rules built on top of the model’s output, and changes to the information provided to customers about automated decisions all require TCF governance review. These events would not trigger a PA change management process but would constitute TCF governance events requiring documentation and review by the conduct compliance function.

Validation Requirements Specific to SA Collections AI

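A standard Basel III model validation program for a collections propensity model covers discriminatory power, calibration, stability, and conceptual soundness. South African AI collections governance requires two additional validation components that no global governance framework standard mandates, disparate impact testing and TCF fair treatment evidence, and it requires the standard stability check to run as continuous drift detection rather than a periodic exercise.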

Disparate impact testing across South Africa’s constitutionally protected characteristics. South African banks are subject to equity obligations under the Constitution and the Promotion of Equality and Prevention of Unfair Discrimination Act that require AI collections models to be tested for disparate impact across race, gender, and disability. The model validation program must document the protected characteristics tested, the methodology used to detect disparate impact, the threshold at which detected disparate impact constitutes a finding, and the remediation process when a finding is confirmed. This validation component has no direct equivalent in US SR 11-7, European EBA guidelines, or global Basel Committee standards. It is a South Africa-specific requirement arising from the country’s constitutional framework that must be built into the validation program from the outset.
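One common way to quantify disparate impact is to compare the rate of favourable outcomes for each group against the best-treated group. The sketch below uses that ratio approach in plain Python. The group labels, the sample data, and the `FINDING_THRESHOLD` of 0.8 are assumptions for illustration; the threshold in particular is a convention borrowed from other jurisdictions, not a South African regulatory standard, and each institution would need to document its own.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical threshold below which a ratio is treated as a finding.
FINDING_THRESHOLD = 0.8


def favourable_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of favourable outcomes per group from (group, outcome) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    favourable: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favourable[group] += 1
    return {g: favourable[g] / totals[g] for g in totals}


def impact_ratios(rates: Dict[str, float]) -> Dict[str, float]:
    """Each group's rate divided by the highest group rate."""
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # no favourable outcomes anywhere
    return {g: r / best for g, r in rates.items()}


# Illustrative data: True means the account received the favourable
# treatment (for example, a restructured payment plan offer).
records = ([("group_a", True)] * 8 + [("group_a", False)] * 2
           + [("group_b", True)] * 5 + [("group_b", False)] * 5)

ratios = impact_ratios(favourable_rates(records))
flagged = [g for g, r in ratios.items() if r < FINDING_THRESHOLD]
print(ratios, flagged)
```

A flagged group is a trigger for investigation, not a conclusion: the validation program still has to establish whether the difference reflects legitimate risk differentiation or model bias.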

Stability and model drift detection. The standard Basel III stability requirement uses Population Stability Index analysis to determine whether the distribution of model scores has shifted from the training population. For AI collections models in the South African context, model drift detection through PSI analysis must be operationalised as a continuous monitoring process, not a periodic validation exercise. A PSI breach that goes undetected for weeks means the model has been making decisions on a population materially different from its training distribution, without governance intervention. The PA expects continuous model drift detection to be evidenced in the ongoing monitoring record, not just in annual validation reports.
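For reference, PSI compares the share of accounts in each score band at training time with the share observed in production: the sum over bands of (actual share minus expected share) multiplied by the natural log of (actual share divided by expected share). The sketch below implements that formula. The band counts are invented, and the 0.10 and 0.25 cut-offs are widely used rules of thumb, assumed here for illustration rather than taken from any PA requirement.

```python
import math
from typing import Sequence


def psi(expected_counts: Sequence[float], actual_counts: Sequence[float],
        eps: float = 1e-6) -> float:
    """Population Stability Index across matching score bands."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same score bands")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor the shares so that empty bands do not produce log(0).
        e_share = max(e / e_total, eps)
        a_share = max(a / a_total, eps)
        value += (a_share - e_share) * math.log(a_share / e_share)
    return value


def drift_status(value: float, warn: float = 0.10, breach: float = 0.25) -> str:
    """Map a PSI value to a status using assumed rule-of-thumb cut-offs."""
    if value >= breach:
        return "breach"
    if value >= warn:
        return "investigate"
    return "stable"


training = [100, 200, 400, 200, 100]  # accounts per score band at training
current = [50, 150, 350, 250, 200]    # accounts per score band this week

value = psi(training, current)
status = drift_status(value)
print(round(value, 3), status)
```

Running this calculation on a scheduled basis against the live score distribution, and logging each result, is what turns PSI from an annual validation metric into continuous monitoring evidence.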

TCF fair treatment validation evidence. Beyond the quantitative performance metrics, the validation report for an SA collections model must include evidence that the model’s outputs deliver fair treatment to customers in TCF terms. This means the validation program must review customer outcome data alongside model performance data: whether customers treated differently by the model experienced outcomes consistent with TCF’s six outcomes framework, and whether any systematic differences in treatment across customer groups can be justified by legitimate risk differentiation rather than model bias. PA examiners reviewing an AI collections governance file will expect this evidence to be present in the validation documentation. Its absence is a conduct governance gap even if the technical validation is complete.

What a Prudential Authority Examiner Will Check

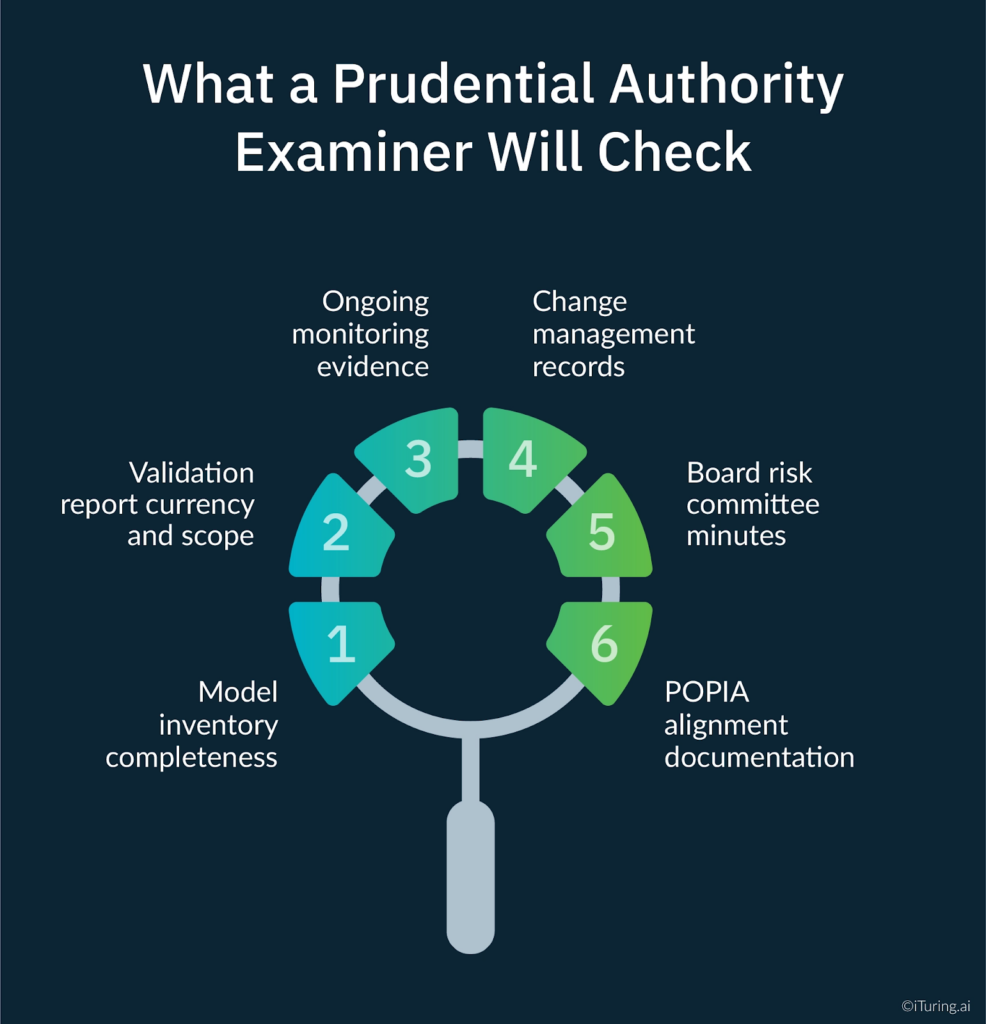

The PA’s examination approach for AI model governance, as signalled in the SARB/FSCA joint report and the KPMG Ten Key Regulatory Challenges analysis, focuses on six documentation areas for AI collections models.

Model inventory completeness. Is the AI collections model, including all component models and the orchestration layer, fully inventoried? Are vendor-hosted models included? Does the inventory entry reflect the current production version of the model, or the version as at last formal validation?

Validation report currency and scope. When was the model last validated? Does the model validation report cover all four standard Basel III dimensions plus the SA-specific disparate impact and TCF components? Was the validation conducted by a team independent of the model development function?

Ongoing monitoring evidence. Is there a continuous performance record, of the kind a complete AI governance monitoring program produces, that shows tracking against documented alert thresholds and covers the full period since the last validation? Are there documented investigation and resolution records for every threshold breach? An annual validation report presented without supporting monitoring logs does not satisfy this requirement.
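The breach-to-resolution check can be sketched as a reconciliation between the monitoring log and the resolution records. The metric values, dates, and alert threshold below are hypothetical, and the log format is an assumption made for the example.

```python
from typing import Iterable, List, Set, Tuple


def unresolved_breaches(readings: Iterable[Tuple[str, float]],
                        alert_threshold: float,
                        resolved_dates: Set[str]) -> List[str]:
    """Dates where the metric exceeded the threshold with no resolution record."""
    breaches = [date for date, value in readings if value > alert_threshold]
    return [date for date in breaches if date not in resolved_dates]


# Weekly drift readings (illustrative) and the dates that have a documented
# investigation and resolution on file.
readings = [("2025-01-06", 0.04), ("2025-01-13", 0.12), ("2025-01-20", 0.27)]
resolved = {"2025-01-13"}

open_items = unresolved_breaches(readings, 0.10, resolved)
print(open_items)
```

An empty result is the state the monitoring record should be in before it is presented to an examiner.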

Change management records. Is there a complete record of every model change since deployment, classified by tier, with approval documentation for Tier 1 changes and PA communication records for Tier 2 events? Are retraining governance records present for every retraining event?

Board risk committee minutes. Do board risk committee minutes reference AI model governance reporting? Does the board have documented visibility of the model’s performance, any monitoring findings, and the governance actions taken in response? The Masthead analysis of the FSCA/PA joint report identifies board-level oversight as an explicit supervisory expectation, and examination teams look for evidence that the board has genuine, documented visibility rather than nominal awareness.

POPIA alignment documentation. Is there a records of processing activities entry covering the AI collections model? Does the entry document the legal basis for each data source used as a model input? Are Section 71(3)(b) explanation records maintained for automated decisions made on borrower accounts?

How iTuring Addresses This

iTuring’s model governance module is designed for the three-layer regulatory environment that South African banks operate in under Twin Peaks supervision. The platform delivers enterprise AI governance coverage across all three regulatory frameworks from a single integrated system: PA technical validation and change management, FSCA TCF behavioural outcome documentation, and POPIA data lineage records, all maintained concurrently without requiring the institution to run parallel governance processes for each regulator.

The model inventory component automatically captures all deployed model versions, including vendor-hosted components, with a continuous version control record that reflects the production model’s current state rather than its validation-date state. Self-learning parameter updates are logged against the governance framework’s pre-defined change thresholds, with automatic escalation to the change management workflow when Tier 1 criteria are met.

Model validation support covers all four standard Basel III dimensions plus the two SA-specific requirements: disparate impact testing across race, gender, and other constitutionally protected characteristics using the Fairlearn framework, and TCF fair treatment outcome evidence generated from customer interaction data alongside quantitative model performance metrics. Continuous model drift detection through automated PSI analysis generates early warning alerts 2 to 4 weeks before threshold breaches, giving the model risk function time to initiate governance action before performance deteriorates to examination-relevant levels.

Maker-checker workflows for retraining approval are built into the platform architecture. The model risk function receives automated notification of every retraining event with a pre-populated governance review pack, including the performance comparison between the current production model and the proposed retrained version, before approval is required. Board reporting packs covering model governance status, monitoring findings, and change management activity are generated automatically on a configurable cadence.

One-click examination readiness packages compile all PA, FSCA, and POPIA governance documentation in examination-ready format, covering the six areas PA examiners assess and formatted for each of the three regulatory audiences separately.

Regulatory Disclaimer

This article is for informational purposes only and does not constitute legal or compliance advice. Prudential Authority D12-2025 requirements, FSCA TCF obligations, POPIA regulations, and related regulatory frameworks are subject to change and ongoing supervisory development. The 2025 POPIA Regulation amendments and the COFI Bill provisions referenced in this article are subject to finalisation and further regulatory guidance. Consult qualified South African legal and compliance professionals for guidance specific to your institution.

Sources: KPMG: Ten Key Regulatory Challenges South Africa 2025 | SARB/FSCA: AI in SA Financial Sector 2025 Full Report | SARB/FSCA: AI Governance Frameworks SA Banks | Moonstone: FSCA and PA AI Study 2025 | Masthead: AI in SA Financial Sector FSPs 2026 | Prudential Authority D12-2025 Credit Risk Roadmap | Baker McKenzie: 2025 POPIA Amendments | IT Law: 2025 POPIA Regulation Amendments | FSCA Regulatory Strategy 2025-2028 | FairBridges: AI Governance Framework South Africa USA | SARB Prudential Regulation Report