TL;DR

- SARB implemented Basel III in January 2013; Prudential Authority issued a Credit Risk Roadmap directive in October 2025

- IRB banks must obtain prior written approval from the PA before making any changes to IRB credit risk models

- Three validation pillars: conceptual soundness, ongoing monitoring, outcomes analysis

- AI models require continuous revalidation; traditional quarterly monitoring is insufficient

- SARB/PA and FSCA published a joint AI in financial sector report in November 2025, signalling active regulatory attention to AI governance

On 24 November 2025, the Prudential Authority of the South African Reserve Bank and the Financial Sector Conduct Authority jointly published a report on artificial intelligence in South Africa’s financial sector. The report drew on analysis conducted throughout 2024 and set out, for the first time in a formal joint publication, the regulators’ view of how AI adoption creates opportunities and risks for banks, insurers, and fintechs operating under their supervision. On model governance, the message was clear: financial institutions must ensure that testing and validation of AI models is performed rigorously, and that the use of AI is done in a manner consistent with existing regulatory obligations including POPIA and prudential capital requirements.

For model risk management executives at South African Tier 1 banks, this is an existing obligation the regulatory spotlight is now illuminating more directly. South Africa implemented Basel III from January 2013, consistent with internationally agreed timelines, and the Prudential Authority’s model validation requirements under Basel III Pillar 2 have applied to Internal Ratings-Based (IRB) credit risk scoring models since then. What has changed is the model landscape. The model validation and model risk management frameworks most South African banks built for traditional scorecards were not designed for AI models that self-update, resist simple interpretation, and require fundamentally different techniques to validate appropriately.

This post maps exactly what the Prudential Authority requires for model validation under Basel III, where AI model validation diverges from traditional scorecard validation, and what a PA-compliant AI model validation programme looks like in practice for collections and credit risk scoring models.

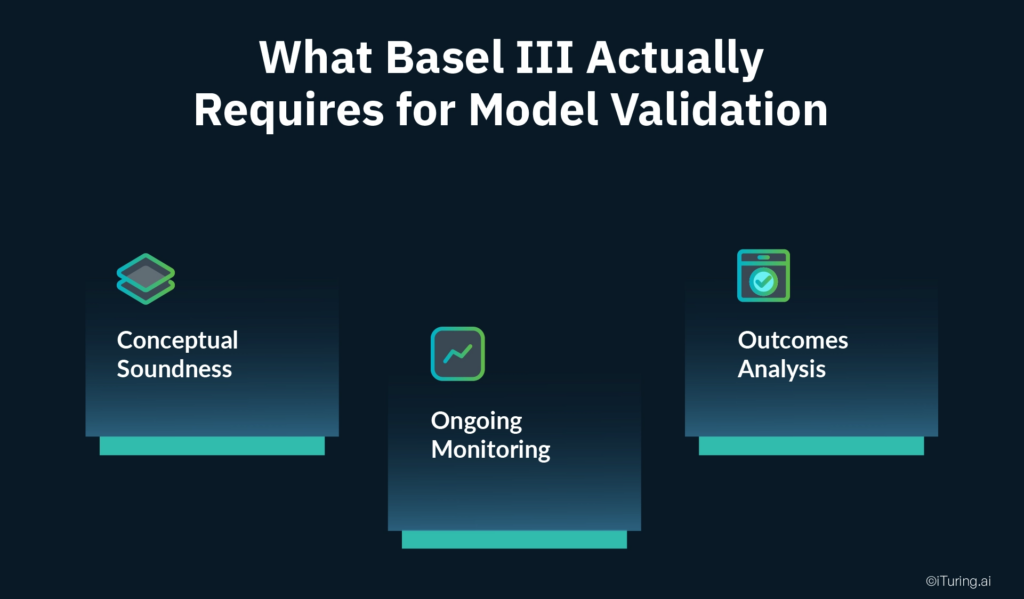

What Basel III Actually Requires for Model Validation

The Basel III framework structures model validation requirements across three core pillars. A South African Journal of Economic and Management Sciences study proposing a model risk framework for ML credit models confirms this as the standard framework applied under Prudential Authority expectations:

- Conceptual Soundness: Validation of the model’s theoretical underpinnings: is the modelling approach appropriate for the risk it is measuring? Is the choice of algorithm justified? Is the feature engineering rationale sound? Does the training data adequately represent the population the model will score?

- Ongoing Monitoring: Continuous assessment of model performance in production: is the model continuing to rank order risk correctly? Are predicted probabilities remaining calibrated against actual outcomes? Is the model stable across different time periods and borrower populations?

- Outcomes Analysis: Back-testing of predictions against realised outcomes: did borrowers the model predicted would default actually default? Did borrowers the model predicted would pay actually pay? Are there segments where predictions are consistently biased in one direction?

For IRB credit risk scoring models specifically, the Prudential Authority’s October 2025 D12-2025 Credit Risk Roadmap directive reinforces a fundamental requirement: banks using the IRB approach must obtain prior written approval from the PA before making any model changes to IRB credit risk models. This requirement applies to material retraining cycles as well as structural rebuilds, because each material retraining constitutes a model change under the PA framework, with profound implications for AI models that retrain continuously.

The model governance framework these requirements demand is substantial. PA guidelines issued in July 2025 covering IRB credit risk exposure build on the July 2022 guidelines on credit risk model governance, progressively tightening the documentation and process requirements that IRB banks must demonstrate at each examination.

Why AI Model Validation Is Fundamentally Different

A traditional credit scorecard is static. It is built at a point in time on a historical dataset, its coefficients are fixed at development, and its logic is transparent: a borrower with characteristic A scores X points, a borrower with characteristic B scores Y points, and the total score determines the risk band. Validating it is a defined, periodic exercise: check its discriminatory power, test its calibration, assess its stability, document the results, and schedule the next validation in 12 months.

AI collections and credit risk scoring models differ across three critical dimensions that require fundamentally different model validation and model risk management approaches.

Dynamic nature. A machine learning model deployed on production data is not the same model three months later if it has been retrained. Coefficients, or their equivalent in a neural network’s weights, change with each training cycle. The model the PA examined during the last validation is no longer the model making decisions today if retraining has occurred without equivalent revalidation.

Opacity. An XGBoost model with 500 trees and 150 features does not have a scorecard. Understanding why a specific prediction was produced requires post-hoc explanation techniques, including SHAP values, LIME, and partial dependence plots, rather than a direct reading of model coefficients. A validation team that lacks these capabilities cannot conduct conceptual soundness validation for an AI model.

Susceptibility to drift. A model trained on data from 2022 and 2023 was trained on a specific economic environment, specific interest rate levels, specific employment patterns, and specific borrower behaviour. As the macro environment evolves, the statistical relationships in the training data may no longer hold in the production population. As research on AI credit evaluation models confirms, models are vulnerable to population drift, and sustained accuracy depends on model drift detection monitoring and dynamic retraining frameworks, not static validation cycles. Model risk management programmes that do not incorporate continuous model drift detection are, by design, blind to this degradation between validation intervals.

| Dimension | Traditional Scorecard | AI Collections / Credit Risk Scoring Model |

| Model structure | Fixed coefficients, transparent | Dynamic weights, requires post-hoc explanation |

| Validation frequency | Annual or biennial | Continuous monitoring, revalidation on material change |

| Conceptual soundness | Coefficient review | Algorithm choice justification, feature engineering rationale |

| Discriminatory power | Gini coefficient (static) | Gini tracked continuously with drift alerts |

| Explainability | Direct scorecard reading | SHAP values, LIME, partial dependence plots |

| PA change approval | On major rebuild | On each material retraining cycle |

Conceptual Soundness for AI Collections Models

Conceptual soundness validation asks whether the model is theoretically appropriate for its stated purpose. For an AI collections propensity model, this requires answering four questions that a Prudential Authority examiner or independent validation team will ask.

Why this algorithm? The choice of algorithm, whether gradient boosting, random forest, neural network, or logistic regression, must be justified against the modelling objective, data characteristics, and interpretability requirements. Choosing an XGBoost model because it produces the highest Gini on the training set is an optimisation decision, not a conceptual soundness justification. Choosing it because it handles the non-linear relationships in collections behaviour data better than logistic regression, has established feature importance techniques that support PA explainability requirements, and has been validated for stability under the available data volume is a documented rationale that satisfies the PA’s model governance requirements.

Why these features? A model trained on 150 features requires a documented feature engineering rationale. The PA’s credit risk model guidelines require that model inputs are conceptually sound: each feature used in an IRB or collections risk model must have a documented theoretical relationship with the risk being modelled. Feature selection based purely on correlation with the training target, without a conceptual rationale, creates conceptual soundness exposure during model validation review.

Is the training data representative? A collections propensity model trained on data from a single economic cycle may not represent the full credit cycle. The Prudential Authority expects that training data covers sufficient historical periods to include both benign and stressed economic conditions. A model trained only on post-pandemic data may not have learned the behavioural patterns associated with an economic downturn.

Are the hyperparameters justified? The hyperparameter tuning approach, covering tree depth, learning rate, and regularisation parameters, must be documented and justified. Hyperparameters that overfit to the training data will produce a model that performs well in validation but degrades in production, compounding the model drift detection challenge.

Ongoing Monitoring: The Continuous Validation Requirement

The most operationally significant departure from traditional scorecard validation is the frequency obligation for AI models.

Traditional credit risk scoring models are monitored quarterly and rebuilt every two to three years. The monitoring cycle reflects the model’s static nature: a scorecard that was well-built does not deteriorate quickly, and quarterly checks are sufficient to catch meaningful performance changes.

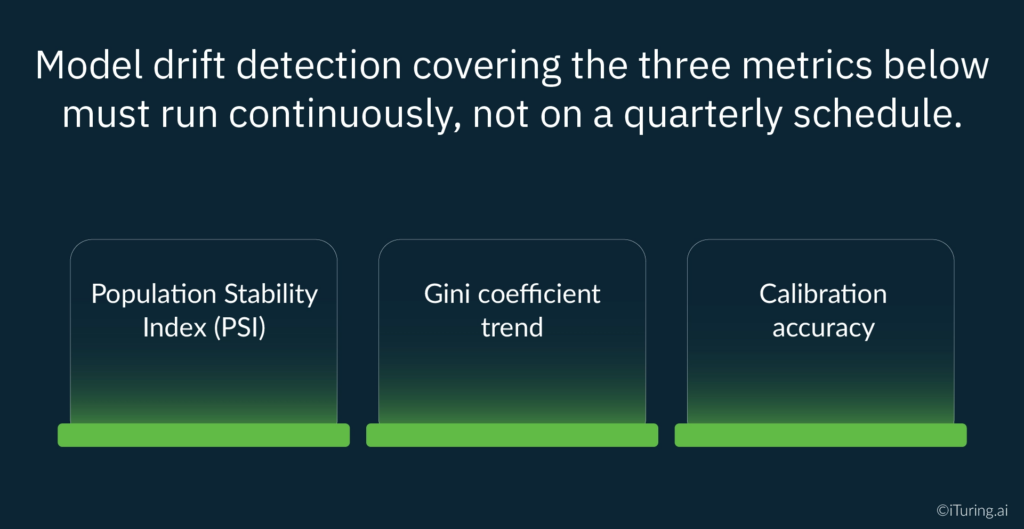

AI collections models require continuous monitoring because drift can materialise within weeks of a macroeconomic shift. Model drift detection covering the three metrics below must run continuously, not on a quarterly schedule.

Population Stability Index (PSI). PSI measures whether the distribution of borrowers being scored in production is consistent with the distribution in the training data. A PSI above 0.25 signals a significant population shift that likely requires retraining and, under the PA’s prior approval requirement for IRB model changes, a retraining triggered by a PSI breach is a model change that must be submitted for approval before deployment.

Gini coefficient trend. For collections propensity and credit risk scoring models, a Gini coefficient above 0.40 is considered acceptable for rank-ordering discriminatory power. IRB validation literature establishes that regular monitoring of discriminatory power is necessary for IRB compliance. A Gini that was 0.52 at deployment and has drifted to 0.38 in production is a model no longer performing at the level it was validated against, and that drift must be detected through systematic model drift detection, documented, and acted upon before the next PA examination.

Calibration accuracy. Calibration measures whether the model’s predicted probabilities correspond to actual outcomes. A model that predicts a 30% probability of payment should see approximately 30% of those accounts actually paying. Calibration drift, where predicted probabilities systematically diverge from realised outcomes, means the model is producing risk estimates that no longer reflect reality. For collections operations, a model that systematically overestimates payment probability will cause accounts to receive lower-priority treatment than their actual risk warrants, directly affecting recovery rates.

Outcomes Analysis: Back-Testing AI Predictions

Outcomes analysis is where the model’s predictions are tested against what actually happened. The Basel III framework requires that this testing be conducted systematically and documented for PA examination.

For AI collections and credit risk scoring models, outcomes analysis has three components:

Out-of-time testing. The model is evaluated on data from a time period after the training cutoff. The standard approach, training on 18 months of historical data and holding out the next 6 months for testing, ensures the model is evaluated on data it has not seen, under conditions that at least partially differ from the training period. For collections models, out-of-time testing should be designed to test the model across different DPD stages, different product types, and different economic quarters.

Discrimination testing. Using the Gini coefficient as the primary metric, the model’s ability to correctly rank-order risk is assessed: can it distinguish high-risk from low-risk accounts consistently? For a collections propensity model targeting accounts most likely to respond to contact, a Gini below 0.35 in out-of-time testing suggests the model lacks sufficient discriminatory power for its intended use.

Segment-level analysis. Outcomes analysis must not only assess overall model performance but also detect segments where the model systematically under- or over-predicts risk. Segment-level analysis is essential for detecting disparate impact: whether the model produces materially different error rates across borrower populations. For PA and FSCA compliance, a model that performs well overall but systematically mis-scores a specific demographic segment has a conceptual soundness problem that aggregate Gini cannot surface.

The Independent Validation Requirement

Basel III’s independence requirement for model validation is unambiguous. Under IRB requirements, validation must be performed by a function independent from the model development team. The validation team must be able to challenge methodology choices, reproduce the model development process, validate the code, test on holdout data, and assess the model’s limitations and weaknesses without the development team’s involvement in those assessments. This independence requirement is a core pillar of any PA-compliant model governance framework.

For AI models, the independence requirement creates a capability challenge that model risk management functions must address directly. Traditional scorecard validation teams are staffed for logistic regression and scorecard arithmetic. Validating an XGBoost model requires data scientists who can reproduce gradient boosting development, apply SHAP analysis, assess hyperparameter tuning decisions, and evaluate code quality. Many South African banks’ independent validation functions have not yet built this capability in-house.

The November 2025 SARB/PA and FSCA joint report acknowledged this explicitly: financial institutions are calling for principle-based regulation focused on outcomes and fairness, and strong collaboration between regulators and the financial sector will be required to build the governance infrastructure that AI adoption in financial services demands. The PA’s response has been to increase supervisory attention and guidance through the D12-2025 roadmap and the July 2025 IRB guidelines, while signalling that model governance, independence, and rigour requirements will not be relaxed to accommodate the capability gap.

How iTuring Addresses This

iTuring’s model risk management platform is built for Prudential Authority compliance in the AI model context, with model validation, model governance, and model drift detection as integrated platform capabilities. Every model deployed on the platform generates continuous monitoring outputs, including PSI tracking, Gini coefficient trend, and calibration accuracy, in a format that satisfies ongoing monitoring documentation requirements at the frequency AI credit risk scoring models demand. iTuring monitors 1,000+ models and completes comprehensive model drift detection investigations in 30 minutes compared to three weeks with traditional manual methods.

Conceptual soundness documentation is produced at model development and updated at each material retraining cycle, covering algorithm justification, feature engineering rationale, training data representativeness assessment, and hyperparameter documentation. Outcomes analysis reports are generated automatically at defined intervals, producing out-of-time testing results, segment-level analysis, and discrimination metrics in the format the PA’s examination teams expect.

The model governance framework records every validation outcome, model version, and change approval against the model inventory, providing the prior approval submission package for PA model change notifications directly from the platform’s documentation outputs. For banks whose independent validation functions need to build AI model validation capability, iTuring provides a structured independent validation support programme: full model reproduction, SHAP-based explainability analysis, code review, and a validation report that meets the three-pillar framework requirements.

For model risk management executives assessing their current AI validation framework against the PA’s requirements, iTuring offers a model governance gap assessment that benchmarks your existing validation programme against the D12-2025 roadmap and the July 2025 IRB guidelines.

Regulatory Disclaimer

The information in this blog is provided for general informational purposes only and does not constitute legal, compliance, or regulatory advice. Model validation requirements for South African banks are governed by the Banks Act 94 of 1990, the Regulations relating to Banks, the Prudential Authority’s Directives including D12-2025 (Credit Risk Roadmap) and D10-2025 (Pillar 3 Disclosure), and guidance issued by the Prudential Authority including the July 2022 and July 2025 guidelines on credit risk models under the IRB approach. The SARB/PA and FSCA joint report on AI in the South African financial sector (November 2025) provides additional supervisory context. Basel III requirements are set by the Basel Committee on Banking Supervision and implemented in South Africa through the Regulations relating to Banks. South African banks should consult qualified legal and regulatory counsel before making changes to credit risk or collections models subject to PA supervision. iTuring’s stated capabilities and performance metrics are based on platform design and client implementations; results may vary depending on institutional configuration and data environment.