TL;DR

- POPIA Section 71 restricts decisions with legal or substantial effect on data subjects that are based solely on automated processing

- Banks must provide “sufficient information about underlying logic” to challenged customers

- POPIA’s eight processing conditions all apply to AI collections data workflows

- Consent is not a workable legal basis for collections; legitimate interest or legal obligation is

- FSCA TCF Outcome 6 rules out unreasonable post-sale barriers, including AI systems that obstruct delinquency resolution

A customer receives an automated SMS telling them their account has been flagged for collections. They do not recognise the amount. They want to know how the decision was made. They ask for a human to review the case.

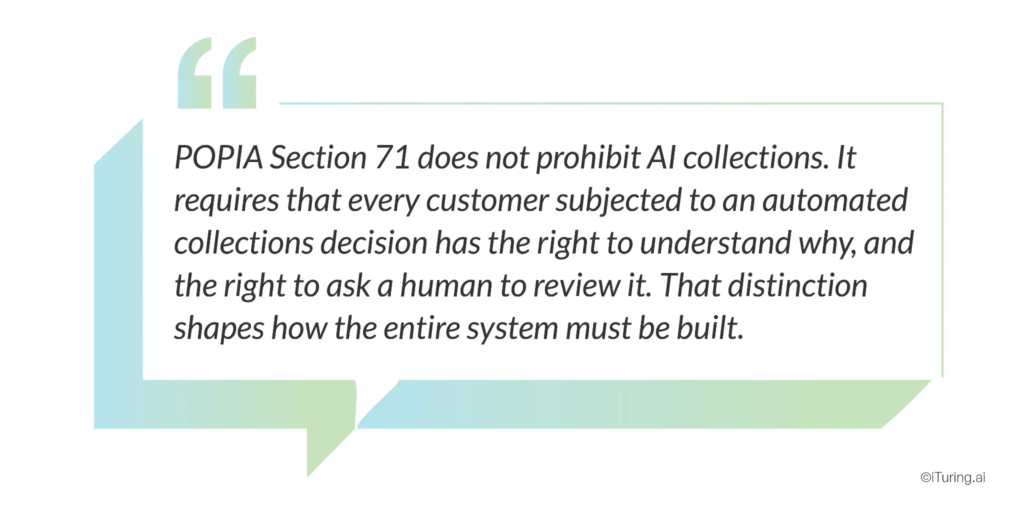

Under South African law, that request is a legal right, not a customer service preference. Generative AI compliance for South African collections means designing every scoring engine, every generative explanation, and every automated message so that POPIA’s data rights and the FSCA’s conduct standards remain intact at the level of each individual decision.

Section 71 of the Protection of Personal Information Act (POPIA) gives every data subject the right not to be subjected to a decision that results in significant legal or personal consequences if that decision was made solely on the basis of automated processing. For AI collections systems, this sits at the centre of every propensity model, every account prioritization decision, and every automated contact trigger the system generates, including any generative AI layer that drafts or explains those decisions.

POPIA came into full enforcement in July 2021. Most South African banks understood its implications for marketing, data storage, and breach notification relatively quickly. Fewer understood what it means for AI-driven collections specifically. That gap is narrowing as the Information Regulator’s enforcement posture matures, and as banks discover, sometimes through enforcement inquiries, that deploying collections AI without a POPIA-aligned responsible AI framework is a meaningful regulatory exposure.

This post covers exactly what POPIA requires for AI collections, what the FSCA’s TCF framework adds on top, and how to build an AI governance monitoring and model governance architecture that satisfies both.

Section 71: Automated Decision-Making in Collections

POPIA Section 71(1) states that a data subject may not be subject to a decision which results in legal consequences or which affects them to a substantial degree, if that decision is based solely on automated processing of personal information intended to profile that person, including their creditworthiness, reliability, location, health, personal preferences, or conduct.

For AI collections systems, this provision is directly applicable. A propensity model that scores an account and triggers escalation to legal collections, a contact strategy model that decides to exclude an account from payment plan offers, a prioritization engine that pushes an account to the top of an enforcement queue: each of these is an automated decision that affects the data subject to a substantial degree.

There are two exceptions under Section 71(2) that permit automated decisions even without individual human review:

- The decision is required or authorised by applicable legislation or a code of conduct specifying appropriate protective measures.

- The decision has been taken in connection with the conclusion or performance of a contract with the data subject, and appropriate protective measures are in place.

For collections operations, the second exception is the relevant one, because collections activity is directly connected to the performance of a loan or credit contract. But that exception does not remove all obligations. Section 71(3) sets out what the appropriate protective measures must include:

- The data subject must have the opportunity to make representations about the automated decision.

- The responsible party must provide the data subject with sufficient information about the underlying logic of the automated processing to enable them to make those representations.

That phrase, “sufficient information about the underlying logic”, is where the operational challenge sits. Legal analysis from Swart Law confirms that this requires clear and understandable information about what drove the decision. It does not require disclosure of the actual AI code or model architecture, but it does require an explanation that a non-technical customer can understand: why this account received this score, which factors drove the decision, and what the predicted consequence is.

In a collections context, this translates to a specific operational requirement: every AI-driven collections decision must be explainable at the individual account level, in plain language, and delivered to the customer on request.

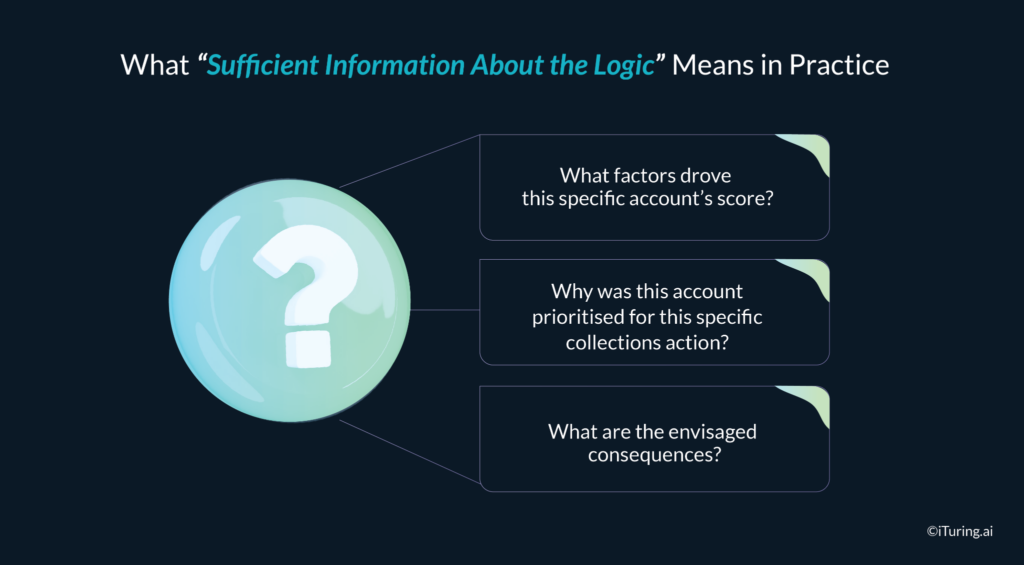

What “Sufficient Information About the Logic” Means in Practice

This is the clause that most compliance teams underestimate. It sounds manageable: explain the decision. In practice, for AI and generative AI collections systems, it raises three questions that require deliberate technical and operational design to answer.

What factors drove this specific account’s score?

A well-designed collections AI system using SHAP (SHapley Additive exPlanations) can generate a feature importance summary for any individual prediction: this account received a high risk score primarily because of 73 days without a login, a 28% increase in credit utilization in the past 60 days, and a pattern of minimum-only payments in the prior three months. That is a plain-language explanation a customer can understand and engage with. A compliant generative AI pattern then uses a governed LLM to transform those technical drivers into customer-friendly wording without altering the underlying meaning.
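The hand-off from attribution to wording can be pictured with the minimal sketch below. It assumes per-account contributions have already been computed upstream (for example with SHAP) and only ranks them and maps each to pre-approved wording. The feature names, contribution values, and the `REASON_TEMPLATES` text are illustrative assumptions, not taken from any real model or platform.

```python
# Hypothetical, pre-approved wording for each model feature (illustrative only).
REASON_TEMPLATES = {
    "days_since_login": "no login to your account for {value} days",
    "utilisation_change_60d": "credit utilisation rising by {value}% over the past 60 days",
    "min_only_payments_3m": "minimum-only payments in {value} of the past 3 months",
}


def top_drivers(contributions, n=3):
    """Return the n features that pushed the risk score up the most."""
    positive = [(name, c) for name, c in contributions.items() if c > 0]
    ranked = sorted(positive, key=lambda kv: kv[1], reverse=True)
    return [name for name, _ in ranked[:n]]


def explain(contributions, feature_values, n=3):
    """Build a plain-language explanation from per-account attributions."""
    reasons = []
    for name in top_drivers(contributions, n):
        template = REASON_TEMPLATES.get(name)
        if template is None:
            # Never improvise wording for a feature without approved text.
            raise KeyError(f"no approved wording for feature {name!r}")
        reasons.append(template.format(value=feature_values[name]))
    return "Your account was flagged mainly because of: " + "; ".join(reasons) + "."


# Assumed attribution values for one account (computed upstream, e.g. by SHAP).
contributions = {
    "days_since_login": 0.31,
    "utilisation_change_60d": 0.22,
    "min_only_payments_3m": 0.12,
    "tenure_years": -0.08,
}
feature_values = {
    "days_since_login": 73,
    "utilisation_change_60d": 28,
    "min_only_payments_3m": 3,
    "tenure_years": 6,
}
message = explain(contributions, feature_values)
```

Keeping the approved reasons as fixed templates gives a reference against which any LLM-polished wording can be checked, so the customer-facing text cannot drift from the factors that actually drove the score.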

Why was this account prioritised for this specific collections action?

Prioritization decisions compound the explainability requirement. Telling a customer their propensity score is one thing. Telling them why that score triggered escalation to legal collections rather than a payment plan offer requires that the escalation logic itself be documented and explainable. Generative explanations must be grounded in those documented rules, not invented by the model.
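One way to keep escalation logic documented and explainable is to hold it as explicit rule data instead of burying it in code paths. The sketch below is a hedged illustration: the rule IDs, thresholds, actions, and customer wording are all hypothetical and would need to come from the bank's own approved collections policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationRule:
    rule_id: str
    action: str
    min_score: float          # minimum propensity score for the rule to apply
    min_days_past_due: int    # minimum delinquency for the rule to apply
    customer_wording: str     # approved explanation of why the rule fired


# Hypothetical rules, ordered from most to least severe.
RULES = [
    EscalationRule("R3", "legal_collections", 0.80, 90,
                   "The account is more than 90 days overdue and scored as high risk."),
    EscalationRule("R2", "payment_plan_offer", 0.50, 30,
                   "The account is more than 30 days overdue, so a payment plan is offered."),
    EscalationRule("R1", "reminder", 0.00, 1,
                   "A payment is overdue, so a reminder is sent."),
]


def decide(score, days_past_due):
    """Return the first (most severe) rule whose conditions are all met, or None."""
    for rule in RULES:
        if score >= rule.min_score and days_past_due >= rule.min_days_past_due:
            return rule
    return None


rule = decide(score=0.85, days_past_due=40)
# The rule ID goes into the decision log; the wording goes to the customer.
record = {"rule_id": rule.rule_id, "action": rule.action, "why": rule.customer_wording}
```

Because each decision records the rule that fired, a generative explanation can be constrained to the `customer_wording` of that rule and audited against it.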

What are the envisaged consequences?

Under best-practice GDPR Article 22 interpretation, which South African courts and the Information Regulator increasingly reference as interpretive guidance, “sufficient information” should include what the decision means for the data subject, not just why it was made. For collections, this means explaining that the automated decision has triggered a specific collections action, what that action involves, and what the customer can do to resolve the situation.

The right to make representations must be operationalized, not just documented. A policy statement that customers can request human review is not sufficient if there is no workflow that routes such requests to a qualified human reviewer, records the review outcome, and provides a written response to the customer. That end-to-end workflow is the operational heart of generative AI compliance in collections and must be in place before any AI or GenAI system goes into production.
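The three workflow steps named above (route to a reviewer, record the outcome, respond to the customer) can be sketched as a small state-tracking record. This is an illustrative outline only: the class, field names, and status labels are assumptions, and a production system would add persistence, deadlines, and access control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


def _now():
    return datetime.now(timezone.utc)


@dataclass
class ReviewRequest:
    """One customer challenge to an automated collections decision."""
    account_id: str
    decision_id: str
    representation: str                      # what the customer said
    received_at: datetime = field(default_factory=_now)
    reviewer: Optional[str] = None
    outcome: Optional[str] = None            # e.g. "upheld" or "overturned"
    reasons: Optional[str] = None
    responded_at: Optional[datetime] = None

    def assign(self, reviewer: str) -> None:
        self.reviewer = reviewer

    def record_outcome(self, outcome: str, reasons: str) -> None:
        if self.reviewer is None:
            raise ValueError("a human reviewer must be assigned first")
        self.outcome, self.reasons = outcome, reasons

    def mark_responded(self) -> None:
        if self.outcome is None:
            raise ValueError("cannot respond before the review is decided")
        self.responded_at = _now()

    @property
    def status(self) -> str:
        if self.responded_at is not None:
            return "closed"
        if self.outcome is not None:
            return "decided"
        if self.reviewer is not None:
            return "in_review"
        return "received"


request = ReviewRequest("ACC-001", "DEC-123", "I do not recognise this amount.")
request.assign("reviewer_17")
request.record_outcome("overturned", "Payment had been received but not allocated.")
request.mark_responded()
```

The guards in `record_outcome` and `mark_responded` make it impossible to close a request without a named human reviewer and a recorded decision, which is the evidence trail the workflow exists to produce.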

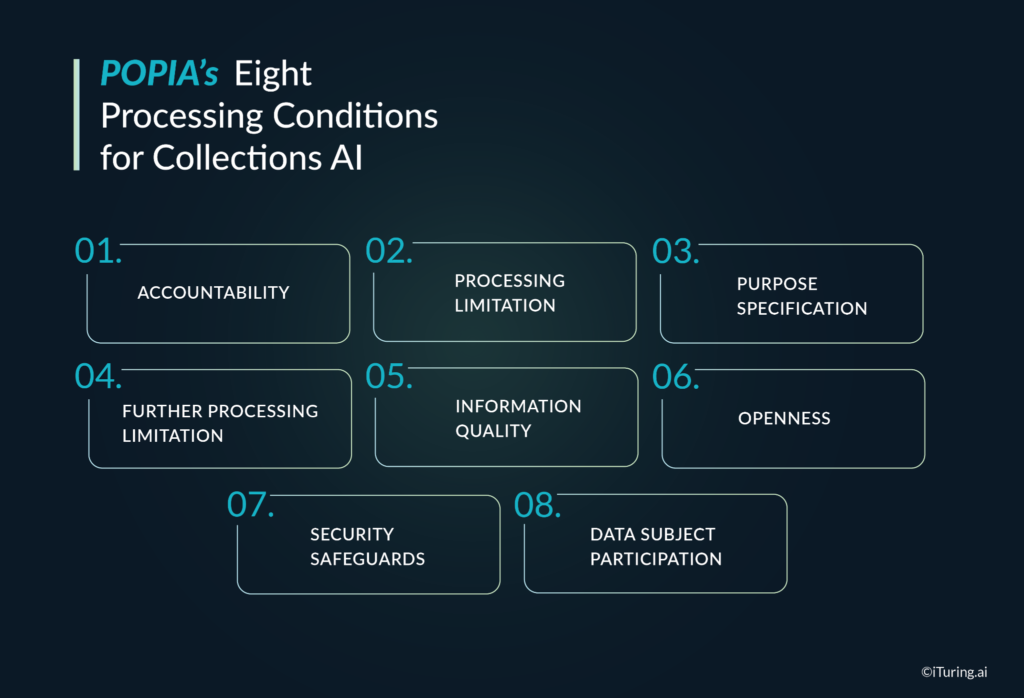

POPIA’s Eight Processing Conditions for Collections AI

POPIA’s eight conditions for lawful processing apply to every stage of an AI collections operation, from data collection through to model training, scoring, and communication. As summarised in standard POPIA compliance guidance, the eight conditions are:

- Accountability: The responsible party (the bank or financial institution) must ensure all conditions are complied with and must designate an Information Officer who is personally accountable.

- Processing limitation: Personal information may only be processed with the data subject’s consent, to carry out a contract, to comply with a legal obligation, to protect a legitimate interest of the data subject, or for the legitimate interests of the responsible party or a third party.

- Purpose specification: Information must be collected for a specific, explicitly defined, and lawful purpose. For collections AI, this means data collected for credit origination purposes must have collections use contemplated at the point of collection, or new purpose authorisation must be obtained.

- Further processing limitation: Information may not be processed further in a way incompatible with the original collection purpose. Training a collections propensity model on data originally collected for a different purpose requires a careful legal basis analysis.

- Information quality: The responsible party must take reasonable steps to ensure that personal information is complete, accurate, not misleading, and updated where necessary. For collections AI, an inaccurate input, a bureau error, or a payment that was made but not updated in the system can produce a wrong propensity score that triggers an unjustified collections action.

- Openness: Data subjects must be notified of what data is being processed, for what purpose, and by whom. For AI collections, this means the bank’s privacy notice must specifically contemplate automated processing for collections purposes.

- Security safeguards: Technical and organisational measures must prevent loss, damage, or unauthorised access to personal information. For AI collections platforms hosted by third-party vendors, this includes contractual data processing agreements and security audits.

- Data subject participation: Data subjects have the right to access their information, request correction, and object to processing. Collections operations must have workflows to handle these requests within the statutory timeframes.

Data governance for AI in a POPIA context is the practical implementation of these eight conditions: data inventories, purpose registers, minimal feature sets, retention schedules, and access controls tailored to AI workloads.

Collections-Specific POPIA Challenges

Three of POPIA’s eight conditions create challenges that are specific to AI collections operations and require deliberate design decisions.

Legal basis: consent does not work for collections

This is the most common early mistake. Some compliance teams, seeing that POPIA requires a lawful processing basis, reach for consent as the default. In collections, consent is not a workable legal basis. A borrower already in default cannot meaningfully consent to collections processing as a condition of being contacted, and consent that can be withdrawn at will cannot underpin an enforcement workflow.

The correct legal basis for collections data processing under POPIA is either legal obligation (where collections activity is required by prudential regulation or contract law) or legitimate interest (where the bank has a documented interest in recovering funds owed under a contract it has entered into with the data subject). Both require documentation in the bank’s AI data governance register, mapping each data processing activity to the legal basis that supports it.
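A register of this kind can be checked mechanically. The sketch below is a minimal illustration, assuming a simple dictionary register; the activity names and evidence references are hypothetical, and the accepted bases mirror the two named in the paragraph above.

```python
# Bases this post treats as appropriate for collections; consent is deliberately absent.
ACCEPTED_BASES = {"legal_obligation", "legitimate_interest"}

# Hypothetical register: processing activity -> legal basis and supporting document.
REGISTER = {
    "propensity_scoring": {"basis": "legitimate_interest", "evidence": "LIA-2024-01"},
    "contact_strategy": {"basis": "legitimate_interest", "evidence": "LIA-2024-02"},
    "regulatory_reporting": {"basis": "legal_obligation", "evidence": "REG-MAP-07"},
    "sms_sentiment_analysis": {"basis": "consent", "evidence": ""},
}


def register_gaps(activities, register):
    """Return {activity: problem} for activities lacking a defensible entry."""
    gaps = {}
    for activity in activities:
        entry = register.get(activity)
        if entry is None:
            gaps[activity] = "not in register"
        elif entry["basis"] not in ACCEPTED_BASES:
            gaps[activity] = f"basis {entry['basis']!r} not accepted for collections"
        elif not entry["evidence"]:
            gaps[activity] = "no supporting documentation"
    return gaps


# Activities actually running in a (hypothetical) collections pipeline.
pipeline = ["propensity_scoring", "contact_strategy", "sms_sentiment_analysis", "voice_analytics"]
gaps = register_gaps(pipeline, REGISTER)
```

Running a check like this on every pipeline change surfaces processing that has been added without a documented basis before it reaches production.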

Minimality: not all data is legally available for collections AI

POPIA’s minimality requirement under Section 10 means that personal information processed must be adequate, relevant, and not excessive given the stated purpose. For a collections propensity model trained on twenty thousand features, this is a live compliance question. Which features are genuinely necessary for the collections purpose? Which can be justified under the minimality standard? A data governance review of model features against the minimality requirement is not optional for a POPIA-compliant AI collections deployment.

Accuracy: errors in input data create legal exposure

If a data subject’s information is inaccurate and an AI model uses that inaccurate information to generate an unjustified collections decision, the bank risks breaching both the information quality condition and the data subject’s rights under Section 71. Bureau data errors, payment processing delays, and account reconciliation gaps all create scenarios where a technically correct model produces a legally incorrect decision because the input data was wrong. Automated data quality checks, bureau dispute workflows, and model input validation are therefore POPIA compliance requirements, not just data hygiene best practices.
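A pre-scoring gate is one way to implement that input validation. The sketch below holds an account for human review whenever one of the error scenarios above is detected. The field names and the 30-day staleness limit are assumptions chosen for illustration, not regulatory thresholds.

```python
from datetime import date


def input_issues(record, today, max_age_days=30):
    """List the data-quality problems that make a record unsafe to score."""
    issues = []
    if record["open_bureau_dispute"]:
        issues.append("open bureau dispute")
    if record["unallocated_payments"] > 0:
        issues.append("payment received but not yet allocated")
    if (today - record["balance_as_of"]).days > max_age_days:
        issues.append("balance data is stale")
    return issues


def route(record, today):
    """Send clean records to the model and everything else to a person."""
    return "hold_for_human_review" if input_issues(record, today) else "score"


today = date(2024, 6, 1)
clean = {
    "open_bureau_dispute": False,
    "unallocated_payments": 0.0,
    "balance_as_of": date(2024, 5, 28),
}
disputed = dict(clean, open_bureau_dispute=True, unallocated_payments=450.0)
stale = dict(clean, balance_as_of=date(2024, 3, 1))
```

Returning the list of issues, not just a pass or fail flag, means the reason for the hold can be logged and later shown to a reviewer or regulator.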

FSCA TCF and the responsible AI framework overlay

POPIA sets the data protection floor. The FSCA’s Treating Customers Fairly (TCF) framework and the joint FSCA / Prudential Authority AI report add a conduct overlay that collections AI must also satisfy.

The FSCA defines six TCF Outcomes; for collections operations, two are directly relevant:

- Outcome 1 requires that customers be confident they are dealing with firms where fair treatment is central to the corporate culture. An AI collections system that contacts customers aggressively, generates unexplained decisions, or fails to offer reasonable resolution paths creates TCF Outcome 1 exposure regardless of its technical accuracy.

- Outcome 6 requires that customers face no unreasonable post-sale barriers to changing products, switching providers, making complaints, or making claims. In a collections context, an AI system that pushes customers quickly toward legal enforcement without accessible payment plan options, that makes it difficult to reach a human reviewer, or that processes complaints through automated-only channels creates exactly the kind of post-sale barrier Outcome 6 prohibits.

A responsible AI framework for collections in South Africa therefore combines POPIA’s rights and conditions with TCF’s customer outcome expectations. It embeds human-in-the-loop design for high-impact decisions, explanation workflows, fair treatment rules, and complaint-handling pathways into the AI design, not as a patch after deployment.

Model governance and AI governance monitoring for collections

Within that responsible AI framework, model governance and AI governance monitoring are the two operational disciplines that keep AI collections on the right side of regulators.

Model governance

Model governance centralises the lifecycle control of every predictive and generative model used in collections:

- A model inventory that lists every propensity model, segmentation model, and language model, with owner, purpose, training data, and risk classification.

- Documented model development and validation, including performance metrics, bias checks, and stability analyses before go-live.

- Approval workflows that capture who authorised each model and under what conditions.

- Immutable lineage from inputs and retrieval sources through to model outputs and customer communications, so that specific decisions can be reconstructed for the Information Regulator or FSCA on demand.
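The lineage requirement in the last bullet can be approximated with a hash-chained log, sketched below using only the standard library. Each entry commits to the previous entry's hash, so any later alteration is detectable on verification. This is an assumption about one possible design: a hash chain makes tampering evident but does not prevent it, and the payload fields shown are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, payload):
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log, payload):
    """Append a lineage record that commits to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "payload": payload, "hash": _digest(prev_hash, payload)}
    log.append(entry)
    return entry


def verify(log):
    """Recompute every hash and link; return False on any mismatch."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if _digest(entry["prev"], entry["payload"]) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical lineage for one decision: inputs -> score -> customer message.
log = []
append_entry(log, {"step": "inputs", "decision_id": "DEC-123", "source": "bureau_2024_06"})
append_entry(log, {"step": "score", "decision_id": "DEC-123", "model": "propensity_v7", "score": 0.85})
append_entry(log, {"step": "message", "decision_id": "DEC-123", "template": "R2"})
intact = verify(log)
```

In practice the chain would be written to append-only storage so that the log itself, and not just its internal consistency, can be trusted when a decision is reconstructed.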

AI governance monitoring

AI governance monitoring is the continuous oversight of how those models behave in production:

- Quantitative monitoring: tracking accuracy, drift, bias, and stability by segment, with alerts when thresholds are breached.

- Qualitative monitoring: sampling model outputs and generative messages for tone, fairness, and compliance, especially in high-risk collections use cases.

- Incident management: logging and remediating AI-related incidents, such as incorrect scores due to input errors or inappropriate generative messages.
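For the quantitative side, drift by segment is often tracked with the Population Stability Index (PSI), which compares the binned score distribution in production against a baseline. The sketch below is illustrative: the segment names and distributions are made up, and the 0.2 alert threshold is a common rule of thumb, not a regulatory figure.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions (proportions)."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)  # avoid log(0) and division by zero on empty bins
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


def drift_alerts(baseline, current_by_segment, threshold=0.2):
    """Return the segments whose PSI against the baseline exceeds the threshold."""
    return sorted(
        segment
        for segment, distribution in current_by_segment.items()
        if psi(baseline, distribution) > threshold
    )


# Share of accounts per score band at validation time (hypothetical).
baseline = [0.25, 0.25, 0.25, 0.25]
# Share of accounts per score band in production, by customer segment (hypothetical).
current = {
    "segment_a": [0.24, 0.26, 0.25, 0.25],
    "segment_b": [0.10, 0.20, 0.30, 0.40],
}
alerts = drift_alerts(baseline, current)
```

Computing the index per segment, not only in aggregate, matters here because drift concentrated in one customer group is also a fairness signal.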

The joint FSCA / Prudential Authority AI report explicitly signals that regulators expect robust governance and monitoring around financial-sector AI, with TCF outcomes as the lens. For collections, this is where generative AI compliance lives day to day: in the dashboards, alerts, and audit logs that show AI is being watched and corrected.

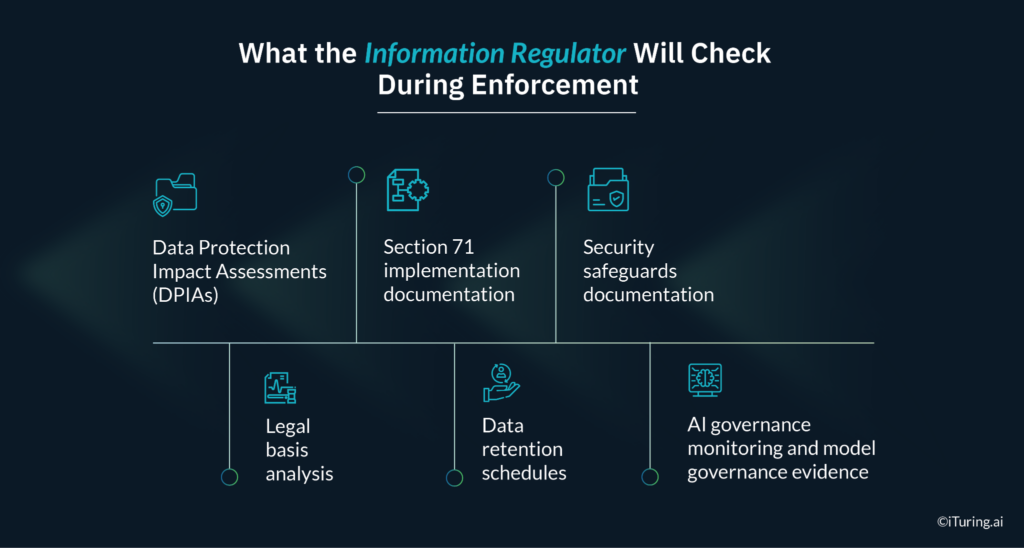

What the Information Regulator Will Check During Enforcement

South Africa’s Information Regulator has been progressively building its enforcement capacity since POPIA came into full effect. Enforcement inquiries related to financial services AI are increasing in frequency. When the Information Regulator examines an AI collections operation, the documentation checklist covers:

- Data Protection Impact Assessments (DPIAs): For processing that is likely to result in high risk to data subjects, which AI-driven collections decisions clearly are, a DPIA must be completed before the processing begins, documenting the nature of the processing, its purposes, the risks, and the mitigating measures.

- Legal basis analysis: A documented analysis mapping every data processing activity in the collections workflow to its lawful basis under POPIA. Undocumented processing has no defensible legal basis.

- Section 71 implementation documentation: How customers are notified that automated decision-making is taking place, how they can request human review, how explanation requests are fulfilled, and how the outcomes of human reviews are recorded and communicated.

- Data retention schedules: Personal information may not be retained beyond the period necessary for its stated purpose. Collections data retention schedules must be defined, documented, and enforced.

- Security safeguards documentation: Vendor agreements, penetration testing records, access control documentation, and breach response procedures must be current and available, including for any cloud or generative AI components.

- AI governance monitoring and model governance evidence: Examiners are increasingly asking for model inventories, validation reports, monitoring dashboards, and per-decision explanations, especially where profiling has triggered collections actions.

Generative AI compliance in this context means that generative models are not treated as “special” or “experimental” from a governance perspective. They sit inside the same model governance and AI governance monitoring framework and produce the same level of audit-ready evidence as any other production model.

How iTuring Addresses This

iTuring’s generative AI and collections platform is designed for regulated financial institutions, with generative AI compliance, model governance, and data governance for AI built into the architecture. Predictive, generative, and agentic AI operate on one governed data foundation, with immutable lineage from source data to retrieval to LLM output and final customer communication.

For South African banks and lenders:

- Every collections decision generated by the platform carries a SHAP-based explanation that can be converted into plain-language text for customers, addressing the “sufficient information about underlying logic” obligation directly.

- A human review workflow is embedded: when a customer challenges an automated collections decision, the request is routed to a qualified reviewer, the review is recorded, and the outcome is communicated to the customer within a defined timeframe.

- Model governance covers both predictive and generative components in a single inventory, with approvals, validation records, and change control captured for every version.

- Data governance for AI is supported through data inventories, lawful basis mapping, retention policies, and residency controls that align with POPIA’s cross-border transfer conditions.

On-premise and private cloud deployment options keep all POPIA-regulated data and generative workloads within controlled South African infrastructure or bank-owned VPCs, reducing cross-border transfer risk and simplifying POPIA compliance.

For compliance heads preparing for their first Information Regulator or FSCA / PA review of an AI collections deployment, iTuring offers a POPIA readiness assessment that benchmarks your current framework against the regulators’ documented enforcement expectations.

Regulatory Disclaimer

The information in this blog is provided for general informational purposes only and does not constitute legal, compliance, or regulatory advice. AI collections operations in South Africa are governed by the Protection of Personal Information Act 4 of 2013 (POPIA), the Financial Sector Conduct Authority’s Treating Customers Fairly framework, the National Credit Act 34 of 2005, the Debt Collectors Act 114 of 1998, and all applicable conduct standards issued by the FSCA. POPIA’s application to automated decision-making in financial services continues to be developed through Information Regulator enforcement practice and judicial interpretation. South African financial institutions should consult qualified legal counsel for advice on POPIA compliance in AI collections deployments. iTuring’s stated capabilities are based on platform design and client implementations; results may vary depending on institutional configuration and data environment.